Public relations

‘We know little about the effect of diet on health. That’s why so much is written about it’. That is the title of a post in which I advocate the view put by John Ioannidis that remarkably little is known about the health effects of individual nutrients. That ignorance has given rise to a vast industry selling advice that has little evidence to support it.

The 2016 Conference of the so-called "College of Medicine" had the title "Food, the Forgotten Medicine". This post gives some background information about some of the speakers at this event. I’m sorry if it appears too ad hominem, but the only way to judge the meeting is via the track record of the speakers.

Quite a lot has been written here about the "College of Medicine". It is the direct successor of the Prince of Wales’ late, unlamented, Foundation for Integrated Health. But unlike the latter, its name disguises its promotion of quackery. Originally it was going to be called the “College of Integrated Health”, but that wasn’t sufficiently deceptive so the name was dropped.

For the history of the organisation, see

Don’t be deceived. The new “College of Medicine” is a fraud and delusion

The College of Medicine is in the pocket of Crapita Capita. Is Graeme Catto selling out?

The conference programme (download pdf) is a masterpiece of bait and switch. It is a mixture of very respectable people, and outright quacks. The former are invited to give legitimacy to the latter. The names may not be familiar to those who don’t follow the antics of the magic medicine community, so here is a bit of information about some of them.

The introduction to the meeting was by Michael Dixon and Catherine Zollman, both veterans of the Prince of Wales Foundation, and both devoted enthusiasts for magic medicine. Zollman even believes in the battiest of all forms of magic medicine, homeopathy (download pdf), for which she totally misrepresents the evidence. Zollman now works at the Penny Brohn centre in Bristol. She’s also linked to the "Portland Centre for integrative medicine", which is run by Elizabeth Thompson, another advocate of homeopathy. It came into being after NHS Bristol shut down the Bristol Homeopathic Hospital, on the very good grounds that it doesn’t work.

Now, like most magic medicine, it is privatised. The Penny Brohn shop will sell you a wide range of expensive and useless "supplements". For example, Biocare Antioxidant capsules at £37 for 90. Biocare make several unjustified claims for their benefits. Among other unnecessary ingredients, they contain a very small amount of green tea. That’s a favourite of "health food addicts", and it was the subject of a recent paper that contains one of the daftest statistical solecisms I’ve ever encountered:

"To protect against type II errors, no corrections were applied for multiple comparisons".

If you don’t understand that, try this paper.

The results are almost certainly false positives, despite the fact that it appeared in Lancet Neurology. It’s yet another example of broken peer review.
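Not correcting for multiple comparisons does nothing to "protect" a study; it just makes false positives more likely. A minimal Python sketch of why, assuming the comparisons are independent and that every null hypothesis is actually true (the number of comparisons in the paper isn't given here, so the values of k are purely illustrative):

```python
# Sketch: the chance of at least one false positive when k comparisons are
# each tested at alpha = 0.05 with no correction.
# Assumes the k tests are independent and every null hypothesis is true.

ALPHA = 0.05


def familywise_error_rate(k: int, alpha: float = ALPHA) -> float:
    """Probability of at least one P < alpha among k independent null tests."""
    return 1.0 - (1.0 - alpha) ** k


def bonferroni_threshold(k: int, alpha: float = ALPHA) -> float:
    """Per-test threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / k


if __name__ == "__main__":
    for k in (1, 5, 10, 20, 50):
        print(f"{k:>3} tests: P(at least one false positive) = "
              f"{familywise_error_rate(k):.2f}, "
              f"Bonferroni per-test threshold = {bonferroni_threshold(k):.4f}")
```

With 20 uncorrected comparisons, the chance of at least one spurious "significant" result is about 64%. The Bonferroni correction is only the simplest fix, and a conservative one.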

It’s been known for decades now that “antioxidant” is no more than a marketing term. There is no evidence of benefit and large doses can be harmful. This obviously doesn’t worry the College of Medicine.

Margaret Rayman was the next speaker. She’s a real nutritionist. Mixing the real with the crackpots is a standard bait and switch tactic.

Eleni Tsiompanou came next. She runs yet another private "wellness" clinic, which makes all the usual exaggerated claims. She seems to have an obsession with Hippocrates (hint: medicine has moved on since then). Dr Eleni’s Joy Biscuits may or may not taste good, but their health-giving properties are make-believe.

Andrew Weil, from the University of Arizona, gave the keynote address. He’s described as "one of the world’s leading authorities on Nutrition and Health". That description alone is sufficient to show the fantasy land in which the College of Medicine exists. He’s a typical supplement salesman, presumably very rich. There is no excuse for not knowing about him. It was 1988 when Arnold Relman (who was editor of the New England Journal of Medicine) wrote A Trip to Stonesville: Some Notes on Andrew Weil, M.D.

“Like so many of the other gurus of alternative medicine, Weil is not bothered by logical contradictions in his argument, or encumbered by a need to search for objective evidence.”

This blog has mentioned his more recent activities many times.

Alex Richardson, of Oxford Food and Behaviour Research (a charity, not part of the university), is an enthusiast for omega-3, a favourite of the supplement industry. She has published several papers that show little evidence of effectiveness. That looks entirely honest. On the other hand, their News section contains many links to the notorious supplement industry lobby site, Nutraingredients, one of the least reliable sources of information on the web (I get their newsletter, a constant source of hilarity and raised eyebrows). I find this worrying for someone who claims to be evidence-based. I’m told that her charity is funded largely by the supplement industry (though I can’t find any mention of that on the web site).

Stephen Devries was a new name to me. You can infer what he’s like from the fact that he has been endorsed by Andrew Weil, and that his address is "Institute for Integrative Cardiology" ("Integrative" is the latest euphemism for quackery). Never trust any talk with a title that contains "The truth about". His was called "The scientific truth about fats and sugars". In a video, he claims that diet has been shown to reduce heart disease by 70%, which gives you a good idea of his ability to assess evidence. But the claim doubtless helps to sell his books.

Prof Tim Spector, of King’s College London, was next. As far as I know he’s a perfectly respectable scientist, albeit one with books to sell. But his talk is now online, and he sounded a bit like a born-again microbiome enthusiast. He seemed to be too impressed by the PREDIMED study, despite its statistical unsoundness, which was pointed out by Ioannidis. Little evidence was presented, though at least he was more sensible than the audience about the uselessness of multivitamin tablets.

Simon Mills talked on “Herbs and spices. Using Mother Nature’s pharmacy to maintain health and cure illness”. He’s a herbalist who has featured here many times. I can recommend especially his video about Hot and Cold herbs as a superb example of fantasy science.

Annie Anderson is Professor of Public Health Nutrition and Founder of the Scottish Cancer Prevention Network. She’s a respectable nutritionist and public health person, albeit with their customary disregard of problems of causality.

Patrick Holden is chair of the Sustainable Food Trust. He promotes "organic farming". Much though I dislike the cruelty of factory farms, the "organic" industry is largely a way of making food more expensive with no health benefits.

The Michael Pittilo 2016 Student Essay Prize was awarded after lunch. Pittilo has featured frequently on this blog as a result of his execrable promotion of quackery; see, in particular, A very bad report: gamma minus for the vice-chancellor.

Nutritional advice for patients with cancer. This discussion involved three people.

Professor Robert Thomas, Consultant Oncologist, Addenbrookes and Bedford Hospitals, Dr Clare Shaw, Consultant Dietitian, Royal Marsden Hospital and Dr Catherine Zollman, GP and Clinical Lead, Penny Brohn UK.

Robert Thomas came to my attention when I noticed that he, as a regular cancer consultant, had spoken at a meeting of the quack charity “YestoLife”. When I saw that he was scheduled to speak at another quack conference, I wrote to him to point out the track records of some of the people at the meeting, and he withdrew from one of them. See The exploitation of cancer patients is wicked. Carrot juice for lunch, then die destitute. The influence seems to have been temporary though. He continues to lend respectability to many dodgy meetings. He edits the Cancernet web site. This site lends credence to bizarre treatments like homeopathy and crystal healing. It used to sell hair mineral analysis, a well-known phony diagnostic method the main purpose of which is to sell you expensive “supplements”. They still sell the “Cancer Risk Nutritional Profile” for £295.00, despite the fact that it provides no proven benefits.

Robert Thomas designed a food "supplement", Pomi-T: capsules that contain Pomegranate, Green tea, Broccoli and Curcumin. Oddly, he seems still to subscribe to the antioxidant myth. Even the supplement industry admits that that’s a lost cause, but that doesn’t stop its use in marketing. The one randomised trial of these pills for prostate cancer was inconclusive. Prostate Cancer UK says "We would not encourage any man with prostate cancer to start taking Pomi-T food supplements on the basis of this research". Nevertheless it’s promoted on Cancernet.co.uk and widely sold. The Pomi-T site boasts about the (inconclusive) trial, but says "Pomi-T® is not a medicinal product".

There was a cookery demonstration by Dale Pinnock, "The medicinal chef". The programme does not tell us whether he made his signature dish, "the Famous Flu Fighting Soup". Needless to say, there isn’t the slightest reason to believe that his soup has any effect on flu.

In summary, the whole meeting was devoted to exaggerating vastly the effect of particular foods. It also acted as advertising for people with something to sell. Much of it was outright quackery, with a leavening of more respectable people, a standard part of the bait-and-switch methods used by all quacks in their attempts to make themselves sound respectable. I find it impossible to tell how much the participants actually believe what they say, and how much it’s a simple commercial drive.

The thing that really worries me is why someone like Phil Hammond supports this sort of thing by chairing their meetings (as he did for the "College of Medicine’s" direct predecessor, the Prince’s Foundation for Integrated Health). His defence of the NHS has made him something of a hero to me. He assured me that he’d asked people to stick to evidence. In that he clearly failed. I guess they must pay well.

Follow-up

In the course of thinking about metrics, I keep coming across cases of over-promoted research. An early case was “Why honey isn’t a wonder cough cure: more academic spin”. More recently, I noticed these examples.

“Effect of Vitamin E and Memantine on Functional Decline in Alzheimer Disease” (spoiler: very little), published in the Journal of the American Medical Association,

and “Primary Prevention of Cardiovascular Disease with a Mediterranean Diet”, in the New England Journal of Medicine (which had the second highest altmetric score in 2013),

and "Sleep Drives Metabolite Clearance from the Adult Brain", published in Science.

In all these cases, misleading press releases were issued by the journals themselves and by the universities. These were copied out by hard-pressed journalists and made headlines that were certainly not merited by the work. In the last three cases, hyped up tweets came from the journals. The responsibility for this hype must eventually rest with the authors. The last two papers came second and fourth in the list of highest altmetric scores for 2013.

Here are two more very recent examples. It seems that every time I check a highly tweeted paper, it turns out that it is very second rate. Both papers involve fMRI imaging, and since the infamous dead salmon paper, I’ve been a bit sceptical about them. But that is irrelevant to what follows.

Boost your memory with electricity

That was a popular headline at the end of August. It referred to a paper in Science magazine:

“Targeted enhancement of cortical-hippocampal brain networks and associative memory” (Wang, JX et al, Science, 29 August, 2014)

This study was promoted by Northwestern University under the headline "Electric current to brain boosts memory". And Science tweeted along the same lines.

Science‘s link did not lead to the paper, but rather to a puff piece, "Rebooting memory with magnets". Again all the emphasis was on memory, with the usual entirely speculative stuff about helping Alzheimer’s disease. But the paper itself was behind Science‘s paywall. You couldn’t read it unless your employer subscribed to Science.

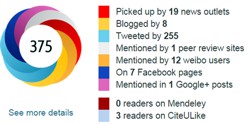

All the publicity led to much retweeting and a big altmetrics score. Given that the paper was not open access, it’s likely that most of the retweeters had not actually read the paper.

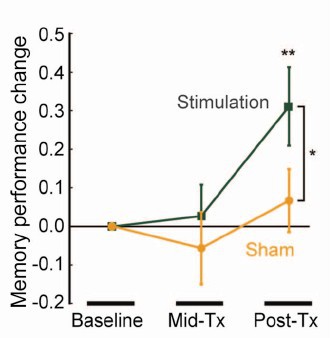

When you read the paper, you found that it was mostly not about memory at all. It was mostly about fMRI. In fact the only reference to memory was in a subsection of Figure 4. This is the evidence.

That looks desperately unconvincing to me. The test of significance gives P = 0.043. In an underpowered study like this, the chance of this being a false discovery is probably at least 50%. A result like this means, at most, "worth another look". It does not begin to justify all the hype that surrounded the paper. The journal, the university’s PR department, and ultimately the authors, must bear the responsibility for the unjustified claims.
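The "at least 50%" figure rests on two quantities the text doesn't spell out: how plausible the hypothesis was before the experiment, and the power of the test. A minimal Python sketch of the simplest version of the calculation, in which `prior` (the assumed fraction of tested hypotheses where a real effect exists) and `power` are illustrative numbers, not values from the paper. It counts every result with P ≤ 0.05; a calculation that uses the observed P value itself (0.043 here) generally gives a higher false discovery rate than this.

```python
# Sketch of the "P <= alpha" false discovery rate calculation.
# prior: assumed fraction of tested hypotheses in which a real effect exists
# power: assumed probability of detecting a real effect when it exists
# Both are illustrative assumptions, not values taken from any paper.

def false_discovery_rate(prior: float, power: float, alpha: float = 0.05) -> float:
    false_pos = alpha * (1.0 - prior)  # no real effect, but P <= alpha anyway
    true_pos = power * prior           # real effect, correctly detected
    return false_pos / (false_pos + true_pos)


if __name__ == "__main__":
    for power in (0.8, 0.5, 0.2):
        for prior in (0.5, 0.1):
            fdr = false_discovery_rate(prior, power)
            print(f"power={power:.1f} prior={prior:.1f} -> FDR={fdr:.2f}")
```

With these made-up inputs, a well-powered test of a 50:50 hypothesis gives a false discovery rate of about 6%, but a hypothesis with a 1-in-10 prior tested at 20% power gives about 69%.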

Science does not allow online comments following the paper, but there are now plenty of sites that do. NHS Choices did a fairly good job of putting the paper into perspective, though they failed to notice the statistical weakness. A commenter on PubPeer noted that Science had recently announced that it would tighten statistical standards. In this case, they failed. The age of post-publication peer review is already reaching maturity.

Boost your memory with cocoa

Another glamour journal, Nature Neuroscience, hit the headlines on October 26, 2014, in a paper that was publicised in a Nature podcast and a rather uninformative press release.

"Enhancing dentate gyrus function with dietary flavanols improves cognition in older adults. Brickman et al., Nat Neurosci. 2014. doi: 10.1038/nn.3850.".

The journal helpfully lists no fewer than 89 news items related to this study. Mostly they were something like “Drinking cocoa could improve your memory” (Kat Lay, in The Times). Only a handful of the 89 reports spotted the many problems.

A puff piece from Columbia University’s PR department quoted the senior author, Dr Small, making the dramatic claim that

“If a participant had the memory of a typical 60-year-old at the beginning of the study, after three months that person on average had the memory of a typical 30- or 40-year-old.”

Like anything to do with diet, the paper immediately got circulated on Twitter. No doubt most of the people who retweeted the message had not read the (paywalled) paper. The links almost all led to inaccurate press accounts, not to the paper itself.

But some people actually read the paywalled paper and post-publication review soon kicked in. Pubmed Commons is a good site for that, because Pubmed is where a lot of people go for references. Hilda Bastian kicked off the comments there (her comment was picked out by Retraction Watch). Her conclusion was this.

"It’s good to see claims about dietary supplements tested. However, the results here rely on a chain of yet-to-be-validated assumptions that are still weakly supported at each point. In my opinion, the immodest title of this paper is not supported by its contents."

(Hilda Bastian runs the Statistically Funny blog, “The comedic possibilities of clinical epidemiology are known to be limitless”, and also a Scientific American blog about risk, Absolutely Maybe.)

NHS Choices spotted most of the problems too, in "A mug of cocoa is not a cure for memory problems". And so did Ian Musgrave of the University of Adelaide, who wrote "Most Disappointing Headline Ever (No, Chocolate Will Not Improve Your Memory)".

Here are some of the many problems.

- The paper was not about cocoa. Drinks containing 900 mg cocoa flavanols (as much as in about 25 chocolate bars) and 138 mg of (−)-epicatechin were compared with drinks containing much lower amounts of these compounds.

- The abstract, all that most people could read, said that subjects were given a "high or low cocoa–containing diet for 3 months". But it wasn’t a test of cocoa: it was a test of a dietary "supplement".

- The sample was small (37 people altogether, split between four groups), and therefore under-powered for detection of the small effect that was expected (and observed).

- The authors declared the result to be "significant", but you had to hunt through the paper to discover that this meant P = 0.04 (hint: it’s 6 lines above Table 1). That means that there is around a 50% chance that it’s a false discovery.

- The test was short: only three months.

- The test didn’t measure memory anyway. It measured reaction speed. They did test memory retention too, and there was no detectable improvement. This was not mentioned in the abstract. Neither was the fact that exercise had no detectable effect.

- The study was funded by the Mars bar company. They, like many others, are clearly looking for a niche in the huge "supplement" market.
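The "under-powered" point can be made concrete with an approximate power calculation. This Python sketch uses the normal approximation to a two-sample comparison, which slightly overstates power for groups this small. The standardized effect sizes `d` are illustrative assumptions, and so is the group size: the text only says 37 people were split between four groups, so it is shown both for about 9 per group and for about 18 per arm if pairs of groups were pooled.

```python
# Approximate power of a two-group comparison, using the normal
# approximation to the two-sample t test (this slightly overstates
# power when groups are as small as these).
# d is an assumed standardized effect size (difference in means / SD).
import math
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution


def approx_power(n_per_group: int, d: float, alpha: float = 0.05) -> float:
    z_crit = Z.inv_cdf(1 - alpha / 2)       # two-sided critical value
    shift = d * math.sqrt(n_per_group / 2)  # expected z statistic under the effect
    return Z.cdf(shift - z_crit)            # ignores the tiny opposite tail


def n_for_power(d: float, power: float = 0.8, alpha: float = 0.05) -> int:
    z_crit = Z.inv_cdf(1 - alpha / 2)
    z_pow = Z.inv_cdf(power)
    return math.ceil(2 * (z_crit + z_pow) ** 2 / d ** 2)


if __name__ == "__main__":
    # 37 people in four groups: ~9 per group if groups are compared
    # separately, ~18 per arm if two groups are pooled (an assumption).
    for n in (9, 18):
        for d in (0.3, 0.5, 0.8):
            print(f"n={n} per group, d={d}: approximate power "
                  f"{approx_power(n, d):.2f}")
    print(f"about {n_for_power(0.5)} per group needed for 80% power at d=0.5")
```

Under these assumptions, even a moderate effect (d = 0.5) would be detected less than a third of the time, whereas roughly 63 per group would be needed for 80% power.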

The claims by the senior author, in a Columbia promotional video, that the drink produced "an improvement in memory" and "an improvement in memory performance by two or three decades" seem to have a very thin basis indeed. As has the statement that "we don’t need a pharmaceutical agent" to ameliorate a natural process (aging). High doses of supplements are pharmaceutical agents.

To be fair, the senior author did say, in the Columbia press release, that "the findings need to be replicated in a larger study—which he and his team plan to do". But there is no hint of this in the paper itself, or in the title of the press release "Dietary Flavanols Reverse Age-Related Memory Decline". The time for all the publicity is surely after a well-powered study, not before it.

The high altmetrics score for this paper is yet another blow to the reputation of altmetrics.

One may well ask why Nature Neuroscience and the Columbia press office allowed such extravagant claims to be made on such a flimsy basis.

What’s going wrong?

These two papers have much in common. Elaborate imaging studies are accompanied by poor functional tests. All the hype focusses on the latter. These led me to the speculation (in PubMed Commons) that what actually happens is as follows.

- Authors do big imaging (fMRI) study.

- Glamour journal says coloured blobs are no longer enough and refuses to publish without functional information.

- Authors tag on a small human study.

- Paper gets published.

- Hyped up press releases issued that refer mostly to the add on.

- Journal and authors are happy.

- But science is not advanced.

It’s no wonder that Dorothy Bishop wrote "High-impact journals: where newsworthiness trumps methodology".

It’s time we forgot glamour journals. Publish open access on the web with open comments. Post-publication peer review is working.

But boycott commercial publishers who charge large amounts for open access. It shouldn’t cost more than about £200, and more and more are essentially free (my latest will appear shortly in Royal Society Open Science).

Follow-up

Hilda Bastian has an excellent post about the dangers of reading only the abstract "Science in the Abstract: Don’t Judge a Study by its Cover"

4 November 2014

I was upbraided on Twitter by Euan Adie, founder of Altmetric.com, because I didn’t click through the altmetric symbol to look at the citations: "shouldn’t have to tell you to look at the underlying data David" and "you could have saved a lot of Google time". But when I did do that, all I found was a list of media reports and blogs, pretty much the same as Nature Neuroscience provides itself.

More interesting, I found that my blog wasn’t listed and neither was PubMed Commons. When I asked why, I was told "needs to regularly cite primary research. PubMed, PMC or repository links”. But this paper is behind a paywall. So I provide (possibly illegally) a copy of it, so anyone can verify my comments. The result is that altmetric’s dumb algorithms ignore it. In order to get counted you have to provide links that lead nowhere.

So here’s a link to the abstract (only) in Pubmed for the Science paper http://www.ncbi.nlm.nih.gov/pubmed/25170153 and here’s the link for the Nature Neuroscience paper http://www.ncbi.nlm.nih.gov/pubmed/25344629

It seems that altmetrics doesn’t even do the job that it claims to do very efficiently.

It worked. By later in the day, this blog was listed in both Nature’s metrics section and by altmetric.com. But comments on PubMed Commons were still missing. That’s bad because it’s an excellent place for post-publication peer review.

This discussion seemed to be of sufficient general interest that we submitted it as a feature to eLife, because this journal is one of the best steps into the future of scientific publishing. Sadly the features editor thought that " too much of the article is taken up with detailed criticisms of research papers from NEJM and Science that appeared in the altmetrics top 100 for 2013; while many of these criticisms seems valid, the Features section of eLife is not the venue where they should be published". That’s pretty typical of what most journals would say. It is that sort of attitude that stifles criticism, and that is part of the problem. We should be encouraging post-publication peer review, not suppressing it. Luckily, thanks to the web, we are now much less constrained by journal editors than we used to be.

Here it is.

Scientists don’t count: why you should ignore altmetrics and other bibliometric nightmares

David Colquhoun1 and Andrew Plested2

1 University College London, Gower Street, London WC1E 6BT

2 Leibniz-Institut für Molekulare Pharmakologie (FMP) & Cluster of Excellence NeuroCure, Charité Universitätsmedizin, Timoféeff-Ressowsky-Haus, Robert-Rössle-Str. 10, 13125 Berlin, Germany.

Jeffrey Beall is librarian at Auraria Library, University of Colorado Denver. Although not a scientist himself, he, more than anyone, has done science a great service by listing the predatory journals that have sprung up in the wake of pressure for open access. In August 2012 he published “Article-Level Metrics: An Ill-Conceived and Meretricious Idea”. At first reading that criticism seemed a bit strong. On mature consideration, it understates the potential that bibliometrics, altmetrics especially, have to undermine both science and scientists.

Altmetrics is the latest buzzword in the vocabulary of bibliometricians. It attempts to measure the “impact” of a piece of research by counting the number of times that it’s mentioned in tweets, Facebook pages, blogs, YouTube and news media. That sounds childish, and it is. Twitter is an excellent tool for journalism. It’s good for debunking bad science, and for spreading links, but too brief for serious discussions. It’s rarely useful for real science.

Surveys suggest that the great majority of scientists do not use Twitter (only 7 to 13% do). Scientific works get tweeted about mostly because they have titles that contain buzzwords, not because they represent great science.

What and who is Altmetrics for?

The aims of altmetrics are ambiguous to the point of dishonesty; they depend on whether the salesperson is talking to a scientist or to a potential buyer of their wares.

At a meeting in London, an employee of altmetric.com said “we measure online attention surrounding journal articles” “we are not measuring quality …” “this whole altmetrics data service was born as a service for publishers”, “it doesn’t matter if you got 1000 tweets . . .all you need is one blog post that indicates that someone got some value from that paper”.

These ideas sound fairly harmless, but in stark contrast, Jason Priem (an author of the altmetrics manifesto) said one advantage of altmetrics is that it’s fast: “Speed: months or weeks, not years: faster evaluations for tenure/hiring”. Although conceivably useful for disseminating preliminary results, such speed isn’t important for serious science (the kind that ought to be considered for tenure), which operates on the timescale of years. Priem also says “researchers must ask if altmetrics really reflect impact”. Even he doesn’t know, yet altmetrics services are being sold to universities, before any evaluation of their usefulness has been done, and universities are buying them. The idea that altmetrics scores could be used for hiring is nothing short of terrifying.

The problem with bibliometrics

The mistake made by all bibliometricians is that they fail to consider the content of papers, because they have no desire to understand research. Bibliometrics are for people who aren’t prepared to take the time (or lack the mental capacity) to evaluate research by reading about it, or in the case of software or databases, by using them. The use of surrogate outcomes in clinical trials is rightly condemned. Bibliometrics are all about surrogate outcomes.

If instead we consider the work described in particular papers that most people agree to be important (or that everyone agrees to be bad), it’s immediately obvious that no publication metrics can measure quality. There are some examples in How to get good science (Colquhoun, 2007). It is shown there that at least one Nobel prize winner failed dismally to fulfil arbitrary bibliometric productivity criteria of the sort imposed in some universities (another example is in Is Queen Mary University of London trying to commit scientific suicide?).

Schekman (2013) has said that science

“is disfigured by inappropriate incentives. The prevailing structures of personal reputation and career advancement mean the biggest rewards often follow the flashiest work, not the best.”

Bibliometrics reinforce those inappropriate incentives. A few examples will show that altmetrics are one of the silliest metrics so far proposed.

The altmetrics top 100 for 2013

The superficiality of altmetrics is demonstrated beautifully by the list of the 100 papers with the highest altmetric scores in 2013. For a start, 58 of the 100 were behind paywalls, and so unlikely to have been read except (perhaps) by academics.

The second most popular paper (with the enormous altmetric score of 2230) was published in the New England Journal of Medicine. The title was Primary Prevention of Cardiovascular Disease with a Mediterranean Diet. It was promoted (inaccurately) by the journal with the following tweet:

Many of the 2092 tweets related to this article simply gave the title, but inevitably the theme appealed to diet faddists, with plenty of tweets like the following:

The interpretations of the paper promoted by these tweets were mostly desperately inaccurate. Diet studies are anyway notoriously unreliable. As John Ioannidis has said

"Almost every single nutrient imaginable has peer reviewed publications associating it with almost any outcome."

This sad situation comes about partly because most of the data comes from non-randomised cohort studies that tell you nothing about causality, and also because the effects of diet on health seem to be quite small.

The study in question was a randomized controlled trial, so it should be free of the problems of cohort studies. But very few tweeters showed any sign of having read the paper. When you read it you find that the story isn’t so simple. Many of the problems are pointed out in the online comments that follow the paper. Post-publication peer review really can work, but you have to read the paper. The conclusions are convincingly demolished in the comments, such as:

“I’m surrounded by olive groves here in Australia and love the hand-pressed EVOO [extra virgin olive oil], which I can buy at a local produce market BUT this study shows that I won’t live a minute longer, and it won’t prevent a heart attack.”

We found no tweets that mentioned the finding from the paper that the diets had no detectable effect on myocardial infarction, death from cardiovascular causes, or death from any cause. The only difference was in the number of people who had strokes, and that showed a very unimpressive P = 0.04.

Neither did we see any tweets that mentioned the truly impressive list of conflicts of interest of the authors, which ran to an astonishing 419 words.

“Dr. Estruch reports serving on the board of and receiving lecture fees from the Research Foundation on Wine and Nutrition (FIVIN); serving on the boards of the Beer and Health Foundation and the European Foundation for Alcohol Research (ERAB); receiving lecture fees from Cerveceros de España and Sanofi-Aventis; and receiving grant support through his institution from Novartis. Dr. Ros reports serving on the board of and receiving travel support, as well as grant support through his institution, from the California Walnut Commission; serving on the board of the Flora Foundation (Unilever). . . “

And so on, for another 328 words.

The interesting question is how such a paper came to be published in the hugely prestigious New England Journal of Medicine. That it happened is yet another reason to distrust impact factors. It seems to be another sign that glamour journals are more concerned with trendiness than quality.

One sign of that is the fact that the journal’s own tweet misrepresented the work. The irresponsible spin in this initial tweet from the journal started the ball rolling, and after this point, the content of the paper itself became irrelevant. The altmetrics score is utterly disconnected from the science reported in the paper: it more closely reflects wishful thinking and confirmation bias.

The fourth paper in the altmetrics top 100 is an equally instructive example.

This work was also published in a glamour journal, Science. The paper claimed that a function of sleep was to “clear metabolic waste from the brain”. It was initially promoted (inaccurately) on Twitter by the publisher of Science. After that, the paper was retweeted many times, presumably because everybody sleeps, and perhaps because the title hinted at the trendy, but fraudulent, idea of “detox”. Many tweets were variants of “The garbage truck that clears metabolic waste from the brain works best when you’re asleep”.

But this paper was hidden behind Science’s paywall. It’s bordering on irresponsible for journals to promote on social media papers that can’t be read freely. It’s unlikely that anyone outside academia had read it, and therefore few of the tweeters had any idea of the actual content, or the way the research was done. Nevertheless it got “1,479 tweets from 1,355 accounts with an upper bound of 1,110,974 combined followers”. It had the huge Altmetrics score of 1848, the highest altmetric score in October 2013.

Within a couple of days, the story fell out of the news cycle. It was not a bad paper, but neither was it a huge breakthrough. It didn’t show that naturally-produced metabolites were cleared more quickly, just that injected substances were cleared faster when the mice were asleep or anaesthetised. This finding might or might not have physiological consequences for mice.

Worse, the paper also claimed that “Administration of adrenergic antagonists induced an increase in CSF tracer influx, resulting in rates of CSF tracer influx that were more comparable with influx observed during sleep or anesthesia than in the awake state”. Simply put, giving awake mice a drug could increase the clearance to the levels seen during sleep. But nobody seemed to notice the absurd concentrations of antagonists that were used in these experiments: “adrenergic receptor antagonists (prazosin, atipamezole, and propranolol, each 2 mM) were then slowly infused via the cisterna magna cannula for 15 min”. The binding constant (the concentration needed to occupy half the receptors) for prazosin is less than 1 nM, so infusing 2 mM means working at more than a million times the concentration that should be effective. Use of such high concentrations is asking for non-specific effects. Most drugs at this sort of concentration have local anaesthetic effects, so perhaps it isn’t surprising that the effects resembled those of ketamine.
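The "million times" arithmetic can be checked with the Hill-Langmuir equation for equilibrium occupancy, p = c/(c + K), assuming simple one-site binding with a Hill coefficient of 1. A minimal Python sketch; it takes K = 1 nM (the text says less than 1 nM, so the real ratio is larger) and treats the infused 2 mM as the concentration at the receptors, which is itself an assumption, since dilution in the CSF would lower it.

```python
# Fractional receptor occupancy by an antagonist at equilibrium,
# from the Hill-Langmuir equation p = c / (c + K), Hill coefficient 1.
# K (equilibrium dissociation constant) = 1 nM is an upper-bound
# assumption for prazosin, following the text.

def occupancy(conc_molar: float, k_molar: float) -> float:
    return conc_molar / (conc_molar + k_molar)


if __name__ == "__main__":
    K_PRAZOSIN = 1e-9   # 1 nM (the text says "less than 1 nM")
    INFUSED = 2e-3      # 2 mM, the concentration infused in the experiment
    print(f"infused / K = {INFUSED / K_PRAZOSIN:.0e}")
    for c in (1e-10, 1e-9, 1e-8, 1e-7, INFUSED):
        print(f"{c:.0e} M -> occupancy {occupancy(c, K_PRAZOSIN):.7f}")
```

Occupancy is already about 99% at 100 × K, while the infused concentration is about 2 × 10⁶ × K, so raising the concentration further cannot increase specific block and only adds scope for the non-specific effects described above.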

The altmetrics editor hadn’t noticed the problems, and none of them featured in the online buzz. That’s partly because to find them you had to read the paper (the antagonist concentrations were hidden in the legend of Figure 4), and partly because you needed to know the binding constant for prazosin to see this warning sign.

The lesson, as usual, is that if you want to know about the quality of a paper, you have to read it. Commenting on a paper without knowing anything of its content is liable to make you look like a jackass.

A tale of two papers

Another approach that looks at individual papers is to compare some of one’s own papers. Sadly, UCL shows altmetric scores on each of your own papers. Mostly they are question marks, because nothing published before 2011 is scored. But two recent papers make an interesting contrast. One is from DC’s side interest in quackery; the other was real science. The former has an altmetric score of 169, the latter has an altmetric score of 2.

The first paper was “Acupuncture is a theatrical placebo”, which was published as an invited editorial in Anesthesia and Analgesia [download pdf]. The paper was scientifically trivial; it took perhaps a week to write. Nevertheless, it was promoted on Twitter, because anything to do with alternative medicine is interesting to the public. It got quite a lot of retweets, and the resulting altmetric score of 169 put it in the top 1% of all articles Altmetric has tracked, and made it the second highest ever for Anesthesia and Analgesia. As well as appearing on the journal’s own website, the article was also posted on the DCScience.net blog (May 30, 2013), where it soon became the most viewed page ever (24,468 views as of 23 November 2013), something that altmetrics does not seem to take into account.

Compare this with the fate of some real, but rather technical, science.

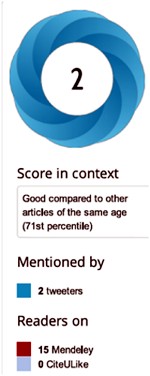

My [DC] best scientific papers are too old (i.e. before 2011) to have an altmetric score, but my best score for any scientific paper is 2. This score was for Colquhoun & Lape (2012) “Allosteric coupling in ligand-gated ion channels”. It was a commentary with some original material. The altmetric score was based on two tweets and 15 readers on Mendeley. One of the two tweets was from me (“Real science; The meaning of allosteric conformation changes http://t.co/zZeNtLdU ”). The only other tweet was an abusive one from a cyberstalker who was upset at having been refused a job years ago. Incredibly, this modest achievement got it rated “Good compared to other articles of the same age (71st percentile)”.

Conclusions about bibliometrics

Bibliometricians spend much time correlating one surrogate outcome with another, from which they learn little. What they don’t do is take the time to examine individual papers. Doing that makes it obvious that most metrics, and especially altmetrics, are indeed an ill-conceived and meretricious idea. Universities should know better than to subscribe to them.

Although altmetrics may be the silliest bibliometric idea yet, much of this criticism applies equally to all such metrics. Even the most plausible metric, counting citations, is easily shown to be nonsense by simply considering individual papers. All you have to do is choose some papers that are universally agreed to be good, and some that are bad, and see how metrics fail to distinguish between them. This is something that bibliometricians fail to do (perhaps because they don’t know enough science to tell which is which). Some examples are given by Colquhoun (2007) (more complete version at dcscience.net).

Eugene Garfield, who started the metrics mania with the journal impact factor (JIF), was clear that it was not suitable as a measure of the worth of individuals. He has been ignored, and the JIF has come to dominate the lives of researchers, despite decades of evidence of the harm it does (e.g. Seglen (1997) and Colquhoun (2003)). In the wake of the JIF, young, bright people have been encouraged to develop yet more spurious metrics (of which ‘altmetrics’ is the latest). It doesn’t matter much whether these metrics are based on nonsense (like counting hashtags) or rely on counting links or comments on a journal website. They won’t (and can’t) indicate what is important about a piece of research: its quality.

People say – I can’t be a polymath. Well, then don’t try to be. You don’t have to have an opinion on things that you don’t understand. The number of people who really do have to have an overview, of the kind that altmetrics might purport to give, those who have to make funding decisions about work that they are not intimately familiar with, is quite small. Chances are, you are not one of them. We review plenty of papers and grants. But it’s not credible to accept assignments outside of your field, and then rely on metrics to assess the quality of the scientific work or the proposal.

It’s perfectly reasonable to give credit for all forms of research outputs, not only papers. That doesn’t need metrics. It’s nonsense to suggest that altmetrics are needed because research outputs are not already valued in grant and job applications. If you write a grant for almost any agency, you can include your CV. If you have a non-publication based output, you can always include it. Metrics are not needed. If you write software, give the number of downloads. Software normally garners citations anyway if it’s of any use to the greater community.

When AP recently wrote a criticism of Heather Piwowar’s altmetrics note in Nature, one correspondent wrote: "I haven’t read the piece [by HP] but I’m sure you are mischaracterising it". This attitude summarizes the too-long-didn’t-read (TLDR) culture that is increasingly becoming accepted amongst scientists, and which the comparisons above show is a central component of altmetrics.

Altmetrics are numbers generated by people who don’t understand research, for people who don’t understand research. People who read papers and understand research just don’t need them and should shun them.

But all bibliometrics give cause for concern, beyond their lack of utility. They do active harm to science. They encourage “gaming” (a euphemism for cheating). They encourage short-term eye-catching research of questionable quality and reproducibility. They encourage guest authorships: that is, they encourage people to claim credit for work which isn’t theirs. At worst, they encourage fraud.

No doubt metrics have played some part in the crisis of irreproducibility that has engulfed some fields, particularly experimental psychology, genomics and cancer research. Underpowered studies with a high false-positive rate may get you promoted, but tend to mislead both other scientists and the public (who in general pay for the work). The waste of public money that must result from following up badly done work that can’t be reproduced, but that was published for the sake of “getting something out”, has not been quantified, but it must be counted against bibliometrics, and sadly it outweighs any advantages from rapid dissemination. Yet universities continue to pay publishers to provide these measures, which do nothing but harm. And the general public has noticed.

It’s now eight years since the New York Times brought to the attention of the public that some scientists engage in puffery, cheating and even fraud.

Overblown press releases written by journals, with the connivance of university PR wonks and of the authors, sometimes go viral on social media (and so score well on altmetrics). Yet another example, from the Journal of the American Medical Association, involved an overblown press release from the journal about a trial that allegedly showed a benefit of high doses of vitamin E for Alzheimer’s disease.

This sort of puffery harms patients and harms science itself.

We can’t go on like this.

What should be done?

Post publication peer review is now happening, in comments on published papers and through sites like PubPeer, where it is already clear that anonymous peer review can work really well. New journals like eLife have open comments after each paper, though authors do not seem to have yet got into the habit of using them constructively. They will.

It’s very obvious that too many papers are being published, and that anything, however bad, can be published in a journal that claims to be peer reviewed. To a large extent this is just another example of the harm done to science by metrics: the publish or perish culture.

Attempts to regulate science by setting “productivity targets” are doomed to do as much harm to science as they have done in the National Health Service in the UK. This has been known to economists for a long time, under the name of Goodhart’s law.

Here are some ideas about how we could restore the confidence of both scientists and of the public in the integrity of published work.

- Nature, Science, and other vanity journals should become news magazines only. Their glamour value distorts science and encourages dishonesty.

- Print journals are overpriced and outdated. They are no longer needed. Publishing on the web is cheap, and it allows open access and post-publication peer review. Every paper should be followed by an open comments section, with anonymity allowed. The old publishers should go the same way as the handloom weavers. Their time has passed.

- Web publication allows proper explanation of methods, without the page, word and figure limits that distort papers in vanity journals. This would also make it very easy to publish negative work, thus reducing publication bias, a major problem (not least for clinical trials).

- Publish or perish has proved counterproductive. It seems just as likely that better science will result without any performance management at all. All that’s needed is peer review of grant applications.

- Providing more small grants rather than fewer big ones should help to reduce the pressure to publish which distorts the literature. The ‘celebrity scientist’, running a huge group funded by giant grants, has not worked well. It’s led to poor mentoring and, at worst, fraud. Of course huge groups sometimes produce good work, but too often at the price of exploitation of junior scientists.

- There is a good case for limiting the number of original papers that an individual can publish per year, and/or total funding. Fewer but more complete and considered papers would benefit everyone, and counteract the flood of literature that has led to superficiality.

- Everyone should read, learn and inwardly digest Peter Lawrence’s The Mismeasurement of Science.

A focus on speed and brevity (cited as major advantages of altmetrics) will help no-one in the end. And a focus on creating and curating new metrics will simply skew science in yet another unsatisfactory way, and rob scientists of the time they need to do their real job: generate new knowledge.

It has been said

“Creation is sloppy; discovery is messy; exploration is dangerous. What’s a manager to do?

The answer in general is to encourage curiosity and accept failure. Lots of failure.”

And, one might add, forget metrics. All of them.

Follow-up

17 Jan 2014

This piece was noticed by the Economist. Their ‘Writing worth reading’ section said

"Why you should ignore altmetrics (David Colquhoun) Altmetrics attempt to rank scientific papers by their popularity on social media. David Colquohoun [sic] argues that they are “for people who aren’t prepared to take the time (or lack the mental capacity) to evaluate research by reading about it.”"

20 January 2014.

Jason Priem, of ImpactStory, has responded to this article on his own blog. In “Altmetrics: A Bibliographic Nightmare?” he seems to back off a lot from his earlier claim (cited above) that altmetrics are useful for making decisions about hiring or tenure. Our response is on his blog.

23 January 2014

The Scholarly Kitchen blog carried another paean to metrics. A vigorous discussion followed. The general line that I’ve followed in this discussion, and those mentioned below, is that bibliometricians won’t qualify as scientists until they test their methods, i.e. show that they predict something useful. In order to do that, they’ll have to consider individual papers (as we do above). At present, articles by bibliometricians consist largely of hubris, with little emphasis on the potential to cause corruption. They remind me of articles by homeopaths: their aim is to sell a product (sometimes for cash, but mainly to promote the authors’ usefulness).

It’s noticeable that all of the pro-metrics articles cited here have been written by bibliometricians. None have been written by scientists.

28 January 2014.

Dalmeet Singh Chawla, a bibliometrician from Imperial College London, wrote a blog on the topic. (Imperial, at least in its Medicine department, is notorious for abuse of metrics.)

29 January 2014 Arran Frood wrote a sensible article about the metrics row in Euroscientist.

2 February 2014 Paul Groth (a co-author of the Altmetrics Manifesto) posted more hubristic stuff about altmetrics on Slideshare. A vigorous discussion followed.

5 May 2014. Another vigorous discussion on ImpactStory blog, this time with Stacy Konkiel. She’s another non-scientist trying to tell scientists what to do. The evidence that she produced for the usefulness of altmetrics seemed pathetic to me.

7 May 2014 A much-shortened version of this post appeared in the British Medical Journal (BMJ blogs)

Here is a record of a couple of recent newspaper pieces. Who says the mainstream media don’t matter any longer? Blogs may be in the lead now when it comes to critical analysis. The best blogs have more expertise and more time to read the sources than journalists. But the mainstream media get the message to a different, and much larger, audience.

The Observer ran a whole page interview with me as part of their “Rational Heroes” series. I rather liked their subtitle [pdf of article]

“Professor of pharmacology David Colquhoun is the take-no-prisoners debunker of pseudoscience on his unmissable blog”

It was pretty accurate apart from the fact that the picture was labelled as “DC in his office”. Actually it was taken (at the insistence of the photographer) in Lucia Sivilotti’s lab.

Photo by Karen Robinson.

The astonishing result of this was that on Sunday the blog got a record 24,305 hits. Normally it gets 1,000-1,400 hits a day between posts (fewer on Sunday), and the previous record was around 7,000 a day.

A week later it was still twice normal. It remains to be seen whether the eventual plateau stays up.

I also gained around 1,000 extra followers on Twitter, though some dropped away quite soon, and 100 or so people signed up for email updates. The dead tree media aren’t dead yet, I’m happy to say.

3 June 2013

Perhaps as a result of the foregoing piece, I got asked to write a column for The Observer, at barely 48 hours’ notice. This is the best I could manage in the time. The web version has links.

This attracted the usual "it worked for me" anecdotes in the comments, but I spent an afternoon answering them. It seems important to have a dialogue, not just to lecture the public. In fact when I read a regular scientific paper, I now find myself looking for the comment section. That may say something about the future of scientific publishing.

It is for others to judge how successfully I engage with the public, but I was quite surprised to discover that UCL’s public engagement unit, @UCL_in_public, has blocked me on Twitter. Hey ho. They have 1,574 followers and I have 7,597. I wish them the best of luck.

Follow-up

I had never intended to write about climate. It is too far from the things I know about. But recent events have unleashed a Palin-esque torrent of comments from people who clearly know even less about it than I do. In any case, it provides a good context in which to think about trust in science.

My interest in it, apart from little matters like the future of the planet, lies in the reputation of science and scientists.

I have been going on for years now about the lack of trust in science, and the extent to which it is a self-inflicted problem. The latest reactions to the developments at the University of East Anglia and the IPCC may show the nature of the problem with dreadful clarity.

Many of us came into science because, apart from the sheer beauty of nature, it seemed like one of the few honest ways of earning a living. Most scientists that I know still think like that, but recent events invite some re-examination of honesty in science.

How dishonest is science?

The first thing to say is that I have never come across anything in my own field that would qualify as fraud, or even dishonest. I did once have a visit from a rather distressed postdoc (not in my area of work) who felt pressurised by her boss into putting an interpretation on her work that she did not agree with. In the end, the bit of work in question was left out of the paper. That could be held to be dishonest, in that the omission wasn’t mentioned, but it could also be held that the omitted result was too ambiguous to contribute much to the paper. It was just short of the point where I’d have felt compelled to do something about it. But only just. That is about the worst thing I’ve encountered in a lifetime.

There is, of course, an enormous difference between being wrong and being dishonest. Any research that is worth doing has an outcome that can’t be predicted before the work is done. At best, one can hope for an approximation to the truth. Mistakes in observations, analysis or interpretation will sometimes mean the announced result is completely wrong, with no trace of dishonesty being involved. But when that happens, others soon find the mistake. It is that self-correcting characteristic of science that keeps it honest in the long run.

Of course there have been occasional cases of outright fraud: simple falsification or fabrication of data. How often it occurs is not really known. A recent analysis in PLoS One of verified cases of misconduct in the USA suggested that 1 in 100,000 scientists per year are to blame, but other ways of counting give larger numbers. For example, when asked, around 2 in 100 scientists claim to be aware of misconduct by someone else. The numbers aren’t huge, but they are much bigger than they should be.

It isn’t perhaps surprising that the Fanelli study found misconduct was most frequent in “medical (including clinical and pharmacological) research studies”, which are often funded by the pharmaceutical industry. Basic biomedical research and other subjects were better, though sadly that could be only because they are less often offered money.

What gives rise to dishonesty?

It seems obvious that one motive is money, as suggested by the worst rates of misconduct being found in clinical pharmacological studies. It is well known that studies funded by industry are more likely to produce results that favour the product than those funded in other ways.

The other reason is presumably the human desire to win fame, promotion and to get grants.

It is no excuse, but it is perhaps a reason for misconduct that the pressure to publish and produce results is now enormous in academia. Even in good universities people are judged by the numbers (rather than the quality) of papers they produce and by what journal they happen to be published in. Contrary to public perception, even quite senior people have no guarantee that they can’t be fired, and life for postdoctoral fellows, who do a large fraction of experimental research, is harsh to the point of cruelty. They exist on a series of short-term contracts, they work exceedingly hard and they have poor prospects of getting a secure job. In conditions like that, the only surprising thing is that there is so little dishonesty.

The pressure to publish in particular journals is particularly invidious because it is known that the number of citations that a paper gets (itself a fallible measure of quality) is independent of the journal in which it appears. Bibliometrists are the curse of our age. (See, for example, Challenging the tyranny of impact factors, 2003; How to get good science, 2007, or its web version; and Peter Lawrence’s article, The mismeasurement of science.)

The enormous competitive pressure under which academics work is imposed by vice-chancellors, research councils and other senior people who should know better. It is a self-inflicted wound.

In other words, the authorities provide a strong incentive to do poor, over-hurried and occasionally dishonest science. Perhaps the surprising thing in the circumstances is that there is so little outright fabrication. The very measures that have the aim of improving science actually have just the opposite effect. That is what happens when science is run by people who don’t do it.

For an idea of what life is like in science now, try Peter Lawrence’s Real Lives and White Lies in the Funding of Scientific Research. Or, for someone at the other end of their career, Jennifer Rohn’s account on Nature blogs.

Given the high degree of insecurity for young researchers, compounded by well-intentioned but vacuous “training” from the daft “Roberts” training courses, or the dismally ineffective Concordat, the only surprise is that so many people remain honest and devoted to good science. Nothing raises the ire of hard-pressed scientists more than the constant emails from HR trying to force people to go to gobbledygook courses on “wellbeing”. Times Higher Education recently did a piece on “Get happy”. The comments are worth reading.

So what about climate change?

Out of thousands of pages in the IPCC reports, a single mistake was found. On page 493 of the IPCC’s second 1000-page Working Group report on “Impacts, Adaptation and Vulnerability” (WGII) it was said that Himalayan glaciers were “very likely” to disappear by 2035. Glaciers are melting, but that date can’t be justified. This single mistake has been blown out of all proportion. Furthermore, it is important to notice that the mistake was found by scientists, not by ‘sceptics’. It is a good example of the self-correcting nature of science. Nevertheless this single mistake has provoked something close to hysteria among those who want to deny that something needs to be done.

On the other hand, the hacked emails from the University of East Anglia (UEA) look bad. It simply isn’t possible at the moment to say whether they are as bad as they seem at first sight. We just don’t know whether anything of importance was concealed, but we should know.

One thing can be said with certainty, and that is that the reaction to their revelation by Dr Phil Jones, and by the vice-chancellor of the University of East Anglia, was nothing short of disastrous. Fred Pearce put it very well in “Climate emails cannot destroy proof that humans are warming the planet”.

Most unforgivably of all, UEA refused to comply with requests under the Freedom of Information Act, and there is some reason to think that relevant material was deleted. The deputy information commissioner, Graham Smith, said in a statement that

“The emails which are now public reveal that Mr Holland’s requests under the Freedom of Information Act were not dealt with as they should have been under the legislation. Section 77 of the Freedom of Information Act makes it an offence for public authorities to act so as to prevent intentionally the disclosure of requested information.”

That seems to me to be a matter that requires the resignation of the vice-chancellor. On this matter, I think George Monbiot is spot on in his article “Climate change email scandal shames the university and requires resignations“.

There was a big feature about academic freedom in Times Higher Education recently. One of the problems was what happens to someone who brings their own university into disrepute. But when that term is used, it is always used about junior partners in the organisation (you know, professors and the like). It should apply equally to heads of communications and vice-chancellors who bring their own university into disrepute, whether the disrepute is brought about by failing to comply with the Freedom of Information Act, or by promoting courses in junk medicine.

In general, conspiracy theories are wrong. I’m not sure how much of the distortion of climate data results from surreptitious funding, by the fossil fuel industry, of opposition to doing anything. The Royal Society is an organisation that is not usually prone to conspiratorial views. That means one must take seriously the fact that in 2006 the Royal Society wrote to ExxonMobil to ask it to stop funding climate denialist organisations. This is a bit like the way Big Pharma has been caught funding “user groups” that endorse its products. Some newspapers like to stir up controversies about things that aren’t very controversial. For example, there is a good analysis of a recent Sunday Times piece here.

Of course it is often alleged that "quackbusters" are funded by Big Pharma, though in fact the amounts of money involved are far too small for Big Pharma to bother. Climate deniers too like to suggest that there is some sort of conspiracy, arranged between hundreds of labs in the world, to conceal the fact that there is no such thing as warming. I guess that shows only that deniers know little about how science works. It is an exceedingly competitive business, and getting hundreds of labs to say the same thing would be like trying to herd cats.

If there is a problem, it is the other way round. Labs are in such intense competition with each other that it can lead to undesirable levels of secrecy.

Blogs in which researchers have a direct dialogue with the public are a big help. As always in the blogosphere, the problem is to find the reliable sources. Two excellent sites, in which scientists (not journalists or lobbyists) talk directly with the public, are realclimate.org and Andrew Russell’s blog. The post on RealClimate, IPCC errors: facts and spin, is especially worth reading.

Total openness is the only cure

All the raw data and all emails have to be disclosed openly. Everything should be put on the web as soon as possible. By appearing to go to ground, UEA has made enormous problems for itself and for the rest of the world. Some people object to total openness on the grounds that the other side tells lies. In the case of climate change (and in the case of junk medicine too) that is undoubtedly true. The opponents are ruthlessly dishonest about facts. The only way to counter that is by being ruthlessly and visibly honest about what you know, and why.

The UK’s Meteorological Office has, to its great credit, put raw data on line. That policy has already paid off, because a science blogger found a mistake in the way that some Australian data had been incorporated into forecasts. The Met Office thanked him and corrected the mistake. In fact the error makes no substantial difference to the warming trend, but the principle is just great. The more people who can check analyses and eliminate slip-ups the better.

Putting raw data on the web is an idea that has been gathering force for a while, in all areas of work, not just climate change. In my own area (stochastic properties of single ion channel molecules) our analysis programs have always been available on the web, free to anyone who wants them, despite the large amount of work that has gone into them. And we run a course, almost free, on the theory that underlies our analyses. Within the last couple of months we have been discussing ways of making all our raw data public (in any case, we would always have sent it to anyone who asked). Digitised single channel records are big files (around 100 MB) and it is only recently that the web has been able to deal with such large amounts of raw data. There are also problems of how to format data so other people can read it. The way we are all heading is clear, and the fact that some people in climate science appeared to be hiding raw data is a disgrace.

Public relations is not the cure

It is not uncommon to read that science needs better PR. That is precisely what is not needed. PR exists to put only one side of the story. That makes it an essentially dishonest occupation. Its aims are the very opposite of those of science. The public aren’t stupid: often they recognise when they are getting half the story.

It is particularly unfortunate that many universities have developed departments with names like "corporate communications". Externally they are seen as giving information about science, and indeed some of the things they do are successful public engagement in science. Only too often, though, it is made clear internally that an important aim of these departments is to improve the image of the university.

But you have to choose. You can engage the public in science or you can be a PR image-builder. You can’t be both.

The matter came to a head in 2008 when, according to a report in Times Higher Education, the University of Nottingham issued a memo that defined public engagement as: “The range of activities of which the primary functions are to raise awareness of the university’s capabilities, expertise and profile to those not already engaged with the institution”.

The mainstream media and political blogs

The biggest problem of all with climate change is that it has become more about politics than about facts. It has become an essential credential for any conservative to deny that climate is changing. It is part of their public image, and most conservatives neither know nor care about evidence. Like Sarah Palin, they just know. In the USA especially, the argument is not really about climate at all. It is really about discrediting Barack Obama: a sort of swift-boat treatment that uses whatever lies are needed.

Just as with the great MMR fiasco and the promotion of its false link to autism, reports in newspapers and blogs must bear much of the blame for failing to inform readers of the actual underlying facts and, just as important, the uncertainties. Of course some papers have done a pretty good job, particularly the Guardian and the Independent in the UK, and the New York Times. The political blogs, by and large, haven’t. The Huffington Post has made little effort (and publishes some appalling nonsense about medicine too).

The problem with political blogs and tabloid newspapers is that they are much more interested in sensation and circulation than they are in giving accurate news and information. Take, for example, the Guido Fawkes blog. To be fair, the blog itself says "The primary motivation for the creation of the blog was purely to make mischief at the expense of politicians and for the author’s own self-gratification", so you know not to expect much. Its writer, Paul Staines, was at the Westminster Skeptics event, Does Political Blogging Make a Difference? He makes no pretence of taking the news seriously, which, I guess, is why I don’t read his blog. After the talks I asked why his blog did little about climate change. His answer was "where are your sandals?". On the way home I tweeted, from a very overcrowded train (most trains from Euston being cancelled that night),

"On way home from #sitp political blogging. Learned that Guido serious about nothing but Guido. Narcissist not journalist."

At least one other person there agreed (thanks, Dave Cole).

It was good to hear Sunny Hundal of Liberal Conspiracy (the only one I read), but I found myself agreeing mostly with the chair, Nick Cohen. It would be a tragedy if the great national and local papers were to vanish. Guido Fawkes and Huffington Post are not remotely like proper newspapers.

Specialist blogs like this one are fine if you are interested in the topics we write about, but we don’t begin to supplant proper newspapers. Bloggers can and do occasionally get good stories. Blogs written by scientists can offer more critical analysis than most journalists have either the knowledge or the time to provide. But they don’t come close to supplanting the detailed reporting, in good newspapers, of local events and of what happens in the law courts or in parliament. That’s why it is vital to buy newspapers, not just read them free on the web.

Follow-up

James Hayton, who works in nanoscience, has posted his thoughts about trust in science on his blog. I discovered this via Twitter (@James_Hayton). He also posted a beautiful clip from the Ascent of Man, in which Jacob Bronowski speaks, from Auschwitz, of the consequences of irrational dogma. I’m old enough to remember Bronowski on a 1950s radio programme, the Brains Trust, though James Hayton clearly isn’t. Now I enjoy equally the talks about science and history given by his daughter, Lisa Jardine.

1 March 2010. Phil Jones and the vice-chancellor of the University of East Anglia appeared before a parliamentary committee. I found their responses to questions very disappointing. The evidence submitted by the Institute of Physics was strongly worded, but spot on.

“The CRU e-mails as published on the internet provide prima facie evidence of determined and co-ordinated refusals to comply with honourable scientific traditions and freedom of information law. The principle that scientists should be willing to expose their ideas and results to independent testing and replication by others, which requires the open exchange of data, procedures and materials, is vital.”

7 March 2010. Thanks to some kind remarks from Michael Kenward (see first comment), I sought wider coverage of this item in the mainstream media. Consequently, on Thursday 4 March, a much shortened version of this article appeared on the Guardian environment site. So far that piece has accumulated 230 comments. The discussion of it has spread to the two blogs that I recommended, Andy Russell’s blog and RealClimate.org, though it has been diverted onto the side-issue of the letter from the Institute of Physics. The seemingly innocent idea that total openness would increase trust has, to my real astonishment, resulted in hysterical accusations that I’m a crypto-denialist. The constant politically-motivated attacks on climate science seem to have induced a paranoid siege mentality in some of them. There is a real danger that such people will harm their own cause, and that would be tragic.

It seems very reasonable to suggest that taxpayers have an interest in knowing what is taught in universities. The recent Pittilo report suggested that degrees should be mandatory in Acupuncture, Herbal Medicine and Traditional Chinese Medicine. So it seems natural to ask to see what is actually taught in these degrees, so one can judge whether it protects the public or endangers them.

Since universities in the UK receive a great deal of public money, it’s easy. Just request the material under the Freedom of Information Act.

Well, uh, it isn’t as simple as that.

Every single application that I have made has been refused. After three years of trying, the Information Commissioner eventually supported my appeal to see teaching materials from the Homeopathy "BSc" at the University of Central Lancashire. He ruled that every single objection (apart from one trivial one) offered by the university was invalid. In particular, it was ruled that universities were not "commercial" organisations for the purposes of the Act.

So problem solved? Not a bit of it. I still haven’t seen any of the materials from the original request because the University of Central Lancashire appealed against the decision and the case of University of Central Lancashire v Information Commissioner is due to be heard on November 3rd, 4th and 5th in Manchester. I’m joined (as lawyers say) as a witness. Watch this space.

UCLan is not the exception. It is the rule. Under the Freedom of Information Act, I have sought teaching materials from UCLan (homeopathy), University of Salford (homeopathy, reflexology and nutritional therapy), University of Westminster (homeopathy, reflexology and nutritional therapy), University of the West of England, University of Plymouth, University of East London, University of Wales (chiropractic and nutritional therapy), Robert Gordon University Aberdeen (homeopathy) and Napier University Edinburgh (herbalism).

In every single case, the request for teaching materials has been refused. And that includes the last three, which were submitted after the decision of the Information Commissioner. They will send things like course validation documents, but these are utterly uninformative box-ticking documents. They say nothing whatsoever about what is actually taught.

The fact that I have been able to discover quite a lot about what’s being taught owes nothing whatsoever to the Freedom of Information Act. It is due entirely to the many honest individuals who have sent me teaching materials, often anonymously. We should be grateful to them. Their principles are rather more impressive than those of their principals.

Since this started about three years ago, two of the universities, UCLan and Salford, have shut down entry to all of their CAM courses. And Westminster has shut two of them, with more rumoured to be closing soon. They are to be congratulated for that, but it is far from being the end of the matter. The Department of Health, and some of the Royal Colleges, have yet to catch up with the universities. The Pittilo report, which recommends making degrees compulsory, is being considered by the Department of Health. The consultation ends on November 2nd: if you haven’t yet responded, please do so now (see how here, and here).

A common excuse: the university does not possess teaching materials (yes, really)

Several of the universities claim that they cannot send teaching materials, because they have no access to them. This happens when the university has accredited a course that is run by another, privately run, institution. The place that does the actual teaching, being private, is exempt from the Freedom of Information Act.

The ludicrous corollary of this excuse is that the university has accredited the course without checking on what is taught, and in some cases without even having seen a timetable.

The University of Wales

In fact the University of Wales doesn’t run courses at all. Like the (near moribund) University of London, it acts as a degree-awarding authority for a lot of Welsh universities. It also validates a lot of courses in non-university institutions, 34 or so of them in the UK, and others scattered around the world.

Many of them are theological colleges. It does seem a bit odd that St Petersburg Christian University, Russia, and International Baptist Theological Seminary, Prague, should be accredited by the University of Wales.

They also validate the International Academy of Osteopathy, Ghent (Belgium), Osteopathie Schule Deutschland, the Istituto Superiore Di Osteopatia, Milan, the Instituto Superior De Medicinas Tradicionales, Barcelona, the Skandinaviska Osteopathögskolan (SKOS) Gothenburg, Sweden and the College D’Etudes Osteopathiques, Canada.

The 34 UK institutions include the Scottish School of Herbal Medicine, the Northern College of Acupuncture and the McTimoney College of Chiropractic.

The case of the Nutritional Therapy course has been described already in Another worthless validation: the University of Wales and nutritional therapy. It emerged that the course was run by a grade 1 new-age fantasist. It is worth recapitulating the follow up.

What does the University of Wales say? So far, nothing. Last week I sent brief and polite emails to Professor Palastanga and to Professor Clement to try to discover whether it is true that the validation process had indeed missed the fact that the course organiser’s writings had been described as “preposterous, made-up, pseudoscientific nonsense” in the Guardian.

So far I have had no reply from the vice-chancellor, but on 26 October I did get an answer from Prof Palastanga.

As regards the two people you asked questions about – J.Young – I personally am not familiar with her book and nobody on the validation panel raised any concerns about it. As for P.Holford similarly there were no concerns expressed about him or his work. In both cases we would have considered their CV’s as presented in the documentation as part of the teaching team. In my experience of conducting degree validations at over 16 UK Universities this is the normal practice of a validation panel.

I have to say this reply confirms my worst fears. Validation committees such as this one simply don’t do their duty. They don’t show the curiosity that is needed to discover the facts about the things that they are meant to be judging. How could they not have looked at the book by the very person whose course they were validating? After all that has been written about Patrick Holford, it is simply mind-boggling that the committee seems to have been quite unaware of any of it. It is yet another example of the harm done to science by an unthinking, box-ticking approach.

Incidentally, Professor Nigel Palastanga has now been made Pro Vice-Chancellor (Quality) at the University of Wales and publishes bulletins on quality control. Well well.

The McTimoney College of Chiropractic was the subject of my next Freedom of Information request to the University of Wales. The reasons for that are, I guess, obvious. They sent me hundreds of pages of validation documents, Student Handbooks (approx 50 pages), BSc (Hons) Chiropractic Course Document. And so on. Reams of it. The documents are mostly in the range of 40 to 100 pages. Tons of paper, but none of it tells you anything whatsoever of interest about what’s being taught. They are a testament to the ability of universities to produce endless vacuous prose with very little content.

They did give me enough information to ask for a sample of the teaching materials on particular topics. But I got a blank refusal, on the grounds that they didn’t possess them. Only McTimoney had them. Their (unusually helpful) Freedom of Information officer replied thus.

“The University is entirely clear about the content of the course but the day to day timetabling of teaching sessions is a matter for the institution rather than the University and we do not require or possess timetable information. The Act does not oblige us to request the information but there is no reason you should not approach McTimoney directly on this.”

So the university doesn’t know the timetable. It doesn’t know what is taught in lectures, but it is "entirely clear about the content of the course".

This response can be described only as truly pathetic.

Either this is a laughably crude form of obstruction of my request, or perhaps, even more frighteningly, the university really believes that its endless box-ticking documents actually provide some useful control of quality. Perhaps the latter interpretation is more charitable. After all, the QAA, CHRE, UUK and every HR department share similar delusions about what constitutes quality.

Perhaps it is just yet another consequence of having science run largely by people who have never done it and don’t understand it.

Validation is a business. The University of Wales validates no fewer than 11,675 courses altogether. Many of these are perfectly ordinary courses in universities in Wales, but they validate 594 courses at non-Welsh accredited institutions, an activity that earned them £5,440,765 in the financial year 2007/8. There’s nothing wrong with that if they did the job properly. In the two cases I’ve looked at, they haven’t done the job properly. They have ticked boxes but they have not looked at what’s being taught or who is teaching it.

The University of Kingston

The University of Kingston offers a “BSc (Hons)” in acupuncture. In view of the fact that the Pittilo group has recommended degrees in acupuncture, there is enormous public interest in what is taught in such degrees, so I asked.

They sent the usual boring validation documents and a couple of sample exam papers. The questions were very clinical, and quite beyond the training of acupuncturists. The validation was done by a panel of three, Dr Larry Roberts (Chair, Director of Academic Development, Kingston University), Mr Roger Hill (Accreditation Officer, British Acupuncture Accreditation Board) and Ms Celia Tudor-Evans (Acupuncturist, College of Traditional Acupuncture, Leamington Spa). So there was nobody with any scientific expertise, and not a word of criticism.


Further to your recent request for information I am writing to advise that the University does not hold the following requested information:

(1) Lecture handouts/notes and powerpoint presentations for the following sessions, mentioned in Template 3rd year weekend and weekday course v26Aug2009_LRE1.pdf
(a) Skills 17: Representational systems + Colour & Sound ex. Tongue feedback 11
(b) Mental Disease + Epilepsy Pulse feedback 21
(c) 18 Auricular Acupuncture
(d) Intro. to Guasha + practice Cupping, moxa practice Tongue feedback 14

(2) I cannot see where the students are taught about research methods and statistics. I would like to see Lecture handouts/notes and PowerPoint presentations for teaching in this area, but the ‘timetables’ that you sent don’t make clear when or if it is taught.

The BSc Acupuncture is delivered by a partner college, the College of Integrated Chinese Medicine (CICM), with Kingston University providing validation only. As such, the University does not hold copies of the teaching materials used on this course. In order to obtain copies of the teaching materials required you may wish to contact the College of Integrated Chinese Medicine directly. This completes the University’s response to your information request.