British Journal of General Practice

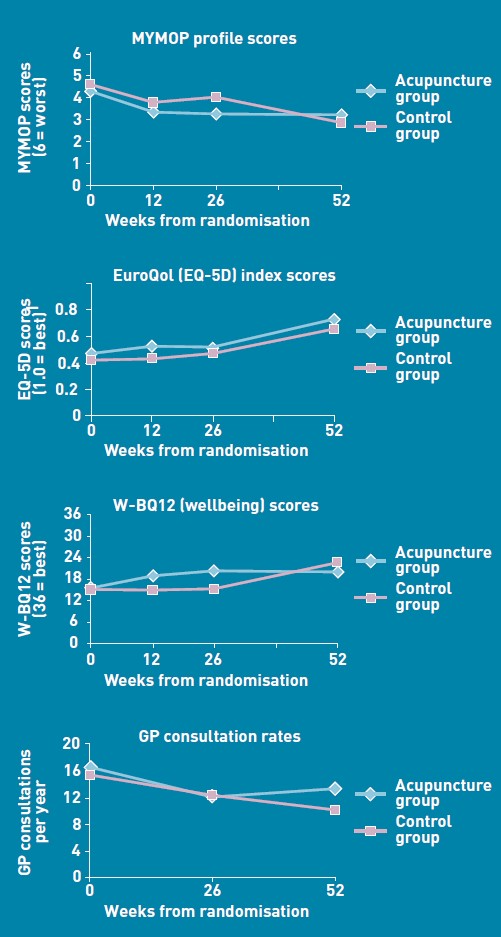

One wonders about the standards of peer review at the British Journal of General Practice. The June issue has a paper, "Acupuncture for ‘frequent attenders’ with medically unexplained symptoms: a randomised controlled trial (CACTUS study)". It has lots of numbers, but the result is very easy to see. Just look at their Figure.

There is no need to wade through all the statistics; it’s perfectly obvious at a glance that acupuncture has at best a tiny and erratic effect on any of the outcomes that were measured.

But this is not what the paper said. On the contrary, the conclusions of the paper said

"Conclusion: The addition of 12 sessions of five-element acupuncture to usual care resulted in improved health status and wellbeing that was sustained for 12 months."

How on earth did the authors manage to reach a conclusion like that?

The first thing to note is that many of the authors are people who make their living largely from sticking needles in people, or advocating alternative medicine. The authors are Charlotte Paterson, Rod S Taylor, Peter Griffiths, Nicky Britten, Sue Rugg, Jackie Bridges, Bruce McCallum and Gerad Kite, on behalf of the CACTUS study team. The senior author, Gerad Kite MAc, is principal of the London Institute of Five-Element Acupuncture London. The first author, Charlotte Paterson, is a well known advocate of acupuncture, as is Nicky Britten.

The conflicts of interest are obvious, but nonetheless one should welcome a “randomised controlled trial” done by advocates of alternative medicine. In fact the results shown in the Figure are both interesting and useful. They show that acupuncture does not even produce any substantial placebo effect. It’s the authors’ conclusions that are bizarre and partisan. Peer review is indeed a broken process.

That’s really all that needs to be said, but for nerds, here are some more details.

How was the trial done?

The description "randomised" is fair enough, but there were no proper controls and the trial was not blinded. It was what has come to be called a "pragmatic" trial, which means a trial done without proper controls. They are, of course, much loved by alternative therapists because their therapies usually fail in proper trials. It’s much easier to get an (apparently) positive result if you omit the controls. But the fascinating thing about this study is that, despite the deficiencies in design, the result is essentially negative.

The authors themselves spell out the problems.

“Group allocation was known by trial researchers, practitioners, and patients”

So everybody (apart from the statistician) knew what treatment a patient was getting. This is an arrangement that is guaranteed to maximise bias and placebo effects.

"Patients were randomised on a 1:1 basis to receive 12 sessions of acupuncture starting immediately (acupuncture group) or starting in 6 months’ time (control group), with both groups continuing to receive usual care."

So it is impossible to compare acupuncture and control groups at 12 months, contrary to what’s stated in Conclusions.

"Twelve sessions, on average 60 minutes in length, were provided over a 6-month period at approximately weekly, then fortnightly and monthly intervals"

That sounds like a pretty expensive way of getting next to no effect.

"All aspects of treatment, including discussion and advice, were individualised as per normal five-element acupuncture practice. In this approach, the acupuncturist takes an in-depth account of the patient’s current symptoms and medical history, as well as general health and lifestyle issues. The patient’s condition is explained in terms of an imbalance in one of the five elements, which then causes an imbalance in the whole person. Based on this elemental diagnosis, appropriate points are used to rebalance this element and address not only the presenting conditions, but the person as a whole".

Does this mean that the patients were told a lot of mumbo jumbo about “five elements” (fire, earth, metal, water, wood)? If so, anyone with any sense would probably have run a mile from the trial.

"Hypotheses directed at the effect of the needling component of acupuncture consultations require sham-acupuncture controls which while appropriate for formulaic needling for single well-defined conditions, have been shown to be problematic when dealing with multiple or complex conditions, because they interfere with the participative patient–therapist interaction on which the individualised treatment plan is developed. 37–39 Pragmatic trials, on the other hand, are appropriate for testing hypotheses that are directed at the effect of the complex intervention as a whole, while providing no information about the relative effect of different components."

Put simply that means: we don’t use sham acupuncture controls so we can’t distinguish an effect of the needles from placebo effects, or get-better-anyway effects.

"Strengths and limitations: The ‘black box’ study design precludes assigning the benefits of this complex intervention to any one component of the acupuncture consultations, such as the needling or the amount of time spent with a healthcare professional."

"This design was chosen because, without a promise of accessing the acupuncture treatment, major practical and ethical problems with recruitment and retention of participants were anticipated. This is because these patients have very poor self-reported health (Table 3), have not been helped by conventional treatment, and are particularly desperate for alternative treatment options."

It’s interesting that the patients were “desperate for alternative treatment”. Again it seems that every opportunity has been given to maximise non-specific placebo, and get-well-anyway effects.

There is a lot of statistical analysis and, unsurprisingly, many of the differences don’t reach statistical significance. Some do (just) but that is really quite irrelevant. Even if some of the differences are real (not a result of random variability), a glance at the figures shows that their size is trivial.

My conclusions

(1) This paper, though designed to be susceptible to almost every form of bias, shows staggeringly small effects. It is the best evidence I’ve ever seen that not only are needles ineffective, but that placebo effects, if they are there at all, are trivial in size and have no useful benefit to the patient in this case.

(2) The fact that this paper was published with conclusions that appear to contradict directly what the data show, is as good an illustration as any I’ve seen that peer review is utterly ineffective as a method of guaranteeing quality. Of course the editor should have spotted this. It appears that quality control failed on all fronts.

Follow-up

In the first four days of this post, it got over 10,000 hits (almost 6,000 unique visitors).

Margaret McCartney has written about this too, in The British Journal of General Practice does acupuncture badly.

The Daily Mail exceeds itself in an article by Jenny Hope which says “Millions of patients with ‘unexplained symptoms’ could benefit from acupuncture on the NHS, it is claimed”. I presume she didn’t read the paper.

The Daily Telegraph scarcely did better in Acupuncture has significant impact on mystery illnesses. The author of this, very sensibly, remains anonymous.

Many “medical information” sites churn out the press release without engaging the brain, but most of the other newspapers appear, very sensibly, to have ignored the hyped-up press release. Among the worst was Pulse, an online magazine for GPs. At least they’ve published the comments that show their report was nonsense.

The Daily Mash has given this paper a well-deserved spoofing in Made-up medicine works on made-up illnesses.

“Professor Henry Brubaker, of the Institute for Studies, said: “To truly assess the efficacy of acupuncture a widespread double-blind test needs to be conducted over a series of years but to be honest it’s the equivalent of mapping the DNA of pixies or conducting a geological study of Narnia.” ”

There is no truth whatsoever in the rumour being spread on Twitter that I’m Professor Brubaker.

Euan Lawson, also known as Northern Doctor, has done another excellent job on the Paterson paper: BJGP and acupuncture – tabloid medical journalism. Most tellingly, he reproduces the press release from the editor of the BJGP, Professor Roger Jones DM, FRCP, FRCGP, FMedSci.

"Although there are countless reports of the benefits of acupuncture for a range of medical problems, there have been very few well-conducted, randomised controlled trials. Charlotte Paterson’s work considerably strengthens the evidence base for using acupuncture to help patients who are troubled by symptoms that we find difficult both to diagnose and to treat."

Oooh dear. The journal may have a new look, but it would be better if the editor read the papers before writing press releases. Tabloid journalism seems an appropriate description.

Andy Lewis at Quackometer has written about this paper too, and put it into historical context, in Of the Imagination, as a Cause and as a Cure of Disorders of the Body: “In 1800, John Haygarth warned doctors how we may succumb to belief in illusory cures. Some modern doctors have still not learnt that lesson”. It’s sad that, in 2011, a medical journal should fall into a trap that was pointed out so clearly in 1800. He also points out the disgracefully inaccurate press release issued by the Peninsula medical school.

Some tweets

Twitter info: 426 clicks on http://bit.ly/mgIQ6e alone at 15.30 on 1 June (and that’s only the hits via Twitter). By July 8th this had risen to 1,655 hits via Twitter, from 62 different countries.

@followthelemur Selina

MASSIVE peer review fail by the British Journal of General Practice http://bit.ly/mgIQ6e (via @david_colquhoun)

@david_colquhoun David Colquhoun

Appalling paper in Brit J Gen Practice: Acupuncturists show that acupuncture doesn’t work, but conclude the opposite http://bit.ly/mgIQ6e

Retweeted by gentley1300 and 36 others

@david_colquhoun David Colquhoun

I deny the Twitter rumour that I’m Professor Henry Brubaker as in Daily Mash http://bit.ly/mt1xhX (just because of http://bit.ly/mgIQ6e )

@brunopichler Bruno Pichler

http://tinyurl.com/3hmvan4 Made-up medicine works on made-up illnesses (me thinks Henry Brubaker is actually @david_colquhoun)

@david_colquhoun David Colquhoun

HEHE RT @brunopichler: http://tinyurl.com/3hmvan4 Made-up medicine works on made-up illnesses

@psweetman Pauline Sweetman

Read @david_colquhoun’s take on the recent ‘acupuncture effective for unexplained symptoms’ nonsense: bit.ly/mgIQ6e

@bodyinmind Body In Mind

RT @david_colquhoun: ‘Margaret McCartney (GP) also blogged acupuncture nonsense http://bit.ly/j6yP4j My take http://bit.ly/mgIQ6e’

@abritosa ABS

Br J Gen Practice mete a pata na poça: RT @david_colquhoun […] appalling acupuncture nonsense http://bit.ly/j6yP4j http://bit.ly/mgIQ6e

@jodiemadden Jodie Madden

amusing!RT @david_colquhoun: paper in Brit J Gen Practice shows that acupuncture doesn’t work,but conclude the opposite http://bit.ly/mgIQ6e

@kashfarooq Kash Farooq

Unbelievable: acupuncturists show that acupuncture doesn’t work, but conclude the opposite. http://j.mp/ilUALC by @david_colquhoun

@NeilOConnell Neil O’Connell

Gobsmacking spin RT @david_colquhoun: Acupuncturists show that acupuncture doesn’t work, but conclude the opposite http://bit.ly/mgIQ6e

@euan_lawson Euan Lawson (aka Northern Doctor)

Aye too right RT @david_colquhoun @iansample @BenGoldacre Guardian should cover dreadful acupuncture paper http://bit.ly/mgIQ6e

@noahWG Noah Gray

Acupuncturists show that acupuncture doesn’t work, but conclude the opposite, from @david_colquhoun: http://bit.ly/l9KHLv

8 June 2011. I drew the attention of the editor of BJGP to the many comments that have been made on this paper. He assured me that the matter would be discussed at a meeting of the editorial board of the journal. Tonight he sent me the result of this meeting.

Subject: BJGP

Dear Prof Colquhoun

We discussed your emails at yesterday’s meeting of the BJGP Editorial Board, attended by 12 Board members and the Deputy Editor.

The Board was unanimous in its support for the integrity of the Journal’s peer review process for the Paterson et al paper – which was accepted after revisions were made in response to two separate rounds of comments from two reviewers and myself – and could find no reason either to retract the paper or to release the reviewers’ comments.

Some Board members thought that the results were presented in an overly positive way; because the study raises questions about research methodology and the interpretation of data in pragmatic trials attempting to measure the effects of complex interventions, we will be commissioning a Debate and Analysis article on the topic.

In the meantime we would encourage you to contribute to this debate through the usual Journal channels.

Roger Jones

Professor Roger Jones MA DM FRCP FRCGP FMedSci FHEA FRSA

It is one thing to make a mistake; it is quite another thing to refuse to admit it. This reply seems to me to be quite disgraceful.

20 July 2011. The proper version of the story got wider publicity when Margaret McCartney wrote about it in the BMJ. The first rapid response to this article was a lengthy denial by the authors of the obvious conclusion to be drawn from the paper. They merely dig themselves deeper into a hole. The second response was much shorter (and more accurate).

Thank you Dr McCartney

Richard Watson, General Practitioner

The fact that none of the authors of the paper or the editor of BJGP have bothered to try and defend themselves speaks volumes. Like many people I glanced at the report before throwing it away with an incredulous guffaw. You bothered to look into it and refute it – in a real journal. That last comment shows part of the problem with them publishing, and promoting, such drivel. It makes you wonder whether anything they publish is any good, and that should be a worry for all GPs.

30 July 2011. The British Journal of General Practice has published nine letters that object to this study. Some of them concentrate on problems with the methods; others point out what I believe to be the main point: there is essentially no effect there to be explained. In the public interest, I am posting the responses here [download pdf file]

There is also a response from the editor and from the authors. Both are unapologetic. It seems that the editor sees nothing wrong with the peer review process.

I don’t recall ever having come across such incompetence in a journal’s editorial process.

Here’s all he has to say.

"The BJGP Editorial Board considered this correspondence recently. The Board endorsed the Journal’s peer review process and did not consider that there was a case for retraction of the paper or for releasing the peer reviews. The Board did, however, think that the results of the study were highlighted by the Journal in an overly-positive manner. However, many of the criticisms published above are addressed by the authors themselves in the full paper."

If you subscribe to the views of Paterson et al, you may want to buy a T-shirt that has a revised version of the periodic table.

5 August 2011. A meeting with the editor of BJGP

Yesterday I met a member of the editorial board of BJGP. We agreed that the data are fine and should not be retracted. It’s the conclusions that should be retracted. I was also told that the referees’ reports were "bland". In the circumstances that merely confirmed my feeling that the referees failed to do a good job.

Today I met the editor, Roger Jones, himself. He was clearly upset by my comment and I have now changed it to refer to the whole editorial process rather than to him personally. I was told, much to my surprise, that the referees were not acupuncturists but “statisticians”. That I find baffling. It soon became clear that my differences with Professor Jones turned on interpretations of statistics.

It’s true that there were a few comparisons that got below P = 0.05, but the smallest was P = 0.02. The warning signs are there in the Methods section: "all statistical tests were …. deemed to be statistically significant if P < 0.05". This is simply silly: perhaps they should have read Lectures on Biostatistics. Or, for a more recent exposition, the XKCD cartoon in which it’s proved that green jelly beans are linked to acne (P = 0.05). They make lots of comparisons but make no allowance for this in the statistics. Figure 2 alone contains 15 different comparisons: it’s not surprising that a few come out "significant", even if you don’t take into account the likelihood of systematic (non-random) errors when comparing final values with baseline values.
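For anyone who wants the arithmetic behind the multiple-comparisons point, here is a rough sketch. It is my own illustration, not anything taken from the paper: the function names are made up, and it assumes the 15 comparisons are independent, which they certainly are not (they are repeated measurements on the same patients), so the numbers are only a guide. It works out the chance of at least one spurious "significant" result among 15 tests at P < 0.05 when there is no real effect at all, and the stricter threshold that a simple Bonferroni correction would demand.

```python
# Illustrative sketch only. Assumes 15 independent tests and no real effect;
# the names below are hypothetical and nothing here comes from the paper's analysis.

def familywise_error_rate(n_tests: int, alpha: float) -> float:
    """Probability of at least one false positive among n independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests


def bonferroni_threshold(n_tests: int, alpha: float) -> float:
    """Per-test significance threshold under a Bonferroni correction."""
    return alpha / n_tests


n_tests = 15       # comparisons in Figure 2, as counted in the text
alpha = 0.05       # threshold stated in the paper's Methods
smallest_p = 0.02  # smallest P value reported, as quoted in the text

fwer = familywise_error_rate(n_tests, alpha)
threshold = bonferroni_threshold(n_tests, alpha)
expected_false_positives = n_tests * alpha

print(f"Chance of at least one false positive: {fwer:.3f}")
print(f"Expected number of false positives:    {expected_false_positives:.2f}")
print(f"Bonferroni threshold per test:         {threshold:.4f}")
print(f"Smallest P survives correction:        {smallest_p < threshold}")
```

Under those assumptions the chance of at least one false positive is a bit better than evens (about 54%), and the smallest reported value, P = 0.02, is nowhere near the corrected threshold of roughly 0.0033. Bonferroni is a blunt and conservative tool, but it is enough to show why a handful of results just under 0.05 should impress nobody.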

Keen though I am on statistics, this is a case where I prefer the eyeball test. It’s so obvious from the Figure that there’s nothing worth talking about happening that it’s a waste of time and money to torture the numbers to get "significant" differences. You have to be a slavish believer in P values to treat a result like that as anything but mildly suggestive. A glance at the Figure shows the effects, if there are any at all, are trivial.

I still maintain that the results don’t come within a million miles of justifying the authors’ stated conclusion “The addition of 12 sessions of five-element acupuncture to usual care resulted in improved health status and wellbeing that was sustained for 12 months.” Therefore I still believe that a proper course would have been to issue a new and more accurate press release. A brief admission that the interpretation was “overly-positive”, in a journal that the public can’t see, simply isn’t enough.

I can’t understand, either, why the editorial board did not insist on this being done. If they had done so, it would have been temporarily embarrassing, certainly, but people make mistakes, and it would have blown over. By not making a proper correction to the public, the episode has become a cause célèbre and the reputation of the journal will suffer permanent harm. This paper is going to be cited for a long time, and not for the reasons the journal would wish.

Misinformation, like that sent to the press, has serious real-life consequences. You can be sure that the paper, as it still stands, will be cited by every acupuncturist who’s trying to persuade the Department of Health that he’s a "qualified provider".

There was not much unanimity in the discussion up to this point. Things got better when we talked about what a GP should do when there are no effective options. Roger Jones seemed to think it was acceptable to refer a patient to an alternative practitioner if that patient wanted it. I maintained that it’s unethical to explain to a patient how medicine works in terms of pre-scientific myths.

I’d love to have heard the "informed consent" during which "The patient’s condition is explained in terms of imbalance in the five elements which then causes an imbalance in the whole person". If anyone had tried to explain my conditions in terms of an imbalance in my Wood, Water, Fire, Earth and Metal, I’d think they were nuts. The last author, Gerad Kite, runs a private clinic that sells acupuncture for all manner of conditions. You can find his view of science on his web site. It’s condescending and insulting to talk to patients in these terms. It’s the ultimate sort of paternalism. And paternalism is something that’s supposed to be vanishing in medicine. I maintained that this was ethically unacceptable, and that led to a more amicable discussion about the possibility of more honest placebos.

It was good of the editor to meet me in the circumstances. I don’t cast doubt on the honesty of his opinions. I simply disagree with them, both at the statistical level and the ethical level.

30 March 2014

I only just noticed that one of the authors of the paper, Bruce McCallum (who worked as an acupuncturist at Kite’s clinic), appeared in a 2007 Channel 4 News piece. It was a report on the pressure to save money by stopping NHS funding for “unproven and disproved treatments”. McCallum said that scientific evidence was needed to show that acupuncture really worked. Clearly he failed, but to admit that would have affected his income.

Watch the video (McCallum appears near the end).