Monthly Archives: June 2014

The Higher Education Funding Council for England (HEFCE) gives money to universities. The allocation that a university gets depends strongly on the periodical assessments of the quality of their research. Enormous amounts of time, energy and money go into preparing submissions for these assessments, and the assessment procedure distorts the behaviour of universities in ways that are undesirable. In the last assessment, four papers were submitted by each principal investigator, and the papers were read.

In an effort to reduce the cost of the operation, HEFCE has been asked to reconsider the use of metrics to measure the performance of academics. The committee that is doing this job has asked for submissions from any interested person, by June 20th.

This post is a draft for my submission. I’m publishing it here for comments before producing a final version for submission.

Draft submission to HEFCE concerning the use of metrics.

I’ll consider a number of different metrics that have been proposed for the assessment of the quality of an academic’s work.

Impact factors

The first thing to note is that HEFCE is one of the original signatories of DORA (http://am.ascb.org/dora/). The first recommendation of that document is:

"Do not use journal-based metrics, such as Journal Impact Factors, as a surrogate measure of the quality of individual research articles, to assess an individual scientist’s contributions, or in hiring, promotion, or funding decisions".

Impact factors have been found, time after time, to be utterly inadequate as a way of assessing individuals, e.g. [1], [2]. Even their inventor, Eugene Garfield, says that. There should be no need to rehearse yet again the details. If HEFCE were to allow their use, they would have to withdraw from the DORA agreement, and I presume they would not wish to do this.

Article citations

Citation counting has several problems. Most of them apply equally to the H-index.

- Citations may be high because a paper is good and useful. They equally may be high because the paper is bad. No commercial supplier makes any distinction between these possibilities. It would not be in their commercial interests to spend time on that, but it’s critical for the person who is being judged. For example, Andrew Wakefield’s notorious 1998 paper, which gave a huge boost to the anti-vaccine movement, had had 758 citations by 2012 (it was subsequently shown to be fraudulent).

- Citations take far too long to appear to be a useful way to judge recent work, as is needed for judging grant applications or promotions. This is especially damaging to young researchers, and to people (particularly women) who have taken a career break. The counts also don’t take into account citation half-life. A paper that’s still being cited 20 years after it was written clearly had influence, but that takes 20 years to discover.

- The citation rate is very field-dependent. Very mathematical papers are much less likely to be cited, especially by biologists, than more qualitative papers. For example, the solution of the missed event problem in single ion channel analysis [3,4] was the sine qua non for all our subsequent experimental work, but the two papers have only about a tenth of the number of citations of subsequent work that depended on them.

- Most suppliers of citation statistics don’t count citations of books or book chapters. This is bad for me because my only work with over 1000 citations is my 105 page chapter on methods for the analysis of single ion channels [5], which contained quite a lot of original work. It has had 1273 citations according to Google Scholar but doesn’t appear at all in Scopus or Web of Science. Neither do the 954 citations of my statistics text book [6].

- There are often big differences between the numbers of citations reported by different commercial suppliers. Even for papers (as opposed to book articles) there can be a two-fold difference between the number of citations reported by Scopus, Web of Science and Google Scholar. The raw data are unreliable and commercial suppliers of metrics are apparently not willing to put in the work to ensure that their products are consistent or complete.

- Citation counts can be (and already are being) manipulated. The easiest way to get a large number of citations is to do no original research at all, but to write reviews in popular areas. Another good way to have ‘impact’ is to write indecisive papers about nutritional epidemiology. That is not behaviour that should command respect.

- Some branches of science are already facing something of a crisis in reproducibility [7]. One reason for this is the perverse incentives which are imposed on scientists. These perverse incentives include the assessment of their work by crude numerical indices.

- “Gaming” of citations is easy. (If students do it, it’s called cheating: if academics do it, it’s called gaming.) If HEFCE makes money dependent on citations, then this sort of cheating is likely to take place on an industrial scale. Of course that should not happen, but it would (disguised, no doubt, by some ingenious bureaucratic euphemisms).

- For example, Scigen is a program that generates spoof papers in computer science, by stringing together plausible phrases. Over 100 such papers have been accepted for publication. By submitting many such papers, the authors managed to fool Google Scholar into awarding the fictitious author an H-index greater than that of Albert Einstein (see http://en.wikipedia.org/wiki/SCIgen).

- The use of citation counts has already encouraged guest authorships and similar marginally honest behaviour. There is no way to tell whether an author on a paper has actually made any substantial contribution to the work, despite the fact that some journals ask for a statement about contribution.

- It has been known for 17 years that citation counts for individual papers are not detectably correlated with the impact factor of the journal in which the paper appears [1]. That doesn’t seem to have deterred metrics enthusiasts from using both. It should have done.

Given all these problems, it’s hard to see how citation counts could be useful to the REF, except perhaps in really extreme cases such as papers that get next to no citations over 5 or 10 years.

The H-index

This has all the disadvantages of citation counting, but in addition it is strongly biased against young scientists, and against women. This makes it not worth consideration by HEFCE.
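The bias against young scientists follows directly from the standard definition of the index, which is not spelled out above: a person’s H-index is the largest number h such that h of their papers have at least h citations each. The sketch below is only an illustration; the function name and both citation lists are invented for the purpose, not real data.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical records, invented for illustration only.
# A young scientist with three heavily cited papers.
young = [500, 300, 120]
# An established scientist with many modestly cited papers.
established = [12] * 12 + [3] * 30

print(h_index(young), h_index(established))
```

Because the index can never exceed the number of papers published, the young scientist in this made-up example scores far lower than the established one, however influential those three papers are. The sketch says nothing about the bias against women, which has to be judged from evidence, not from the definition.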

Altmetrics

Given the role given to “impact” in the REF, the fact that altmetrics claim to measure impact might make them seem worthy of consideration at first sight. One problem is that the REF failed to make a clear distinction between impact on other scientists in the field and impact on the public.

Altmetrics measures an undefined mixture of both sorts of impact, with totally arbitrary weighting for tweets, Facebook mentions and so on. But the score seems to be related primarily to the trendiness of the title of the paper. Any paper about diet and health, however poor, is guaranteed to feature well on Twitter, as will any paper that has ‘penis’ in the title.

It’s very clear from the examples that I’ve looked at that few people who tweet about a paper have read more than the title. See Why you should ignore altmetrics and other bibliometric nightmares [8].

In most cases, papers were promoted by retweeting the press release or tweet from the journal itself. Only too often the press release is hyped-up. Metrics not only corrupt the behaviour of academics, but also the behaviour of journals. In the cases I’ve examined, reading the papers revealed that they were particularly poor (despite being in glamour journals): they just had trendy titles [8].

There could even be a negative correlation between the number of tweets and the quality of the work. Those who sell altmetrics have never examined this critical question because they ignore the contents of the papers. It would not be in their commercial interests to test their claims if the result was to show a negative correlation. Perhaps the reason why they have never tested their claims is the fear that to do so would reduce their income.

Furthermore you can buy 1000 retweets for $8.00 (http://followers-and-likes.com/twitter/buy-twitter-retweets/). That’s outright cheating of course, and not many people would go that far. But authors, and journals, can do a lot of self-promotion on Twitter that is totally unrelated to the quality of the work.

It’s worth noting that much good engagement with the public now appears on blogs that are written by scientists themselves, but the 3.6 million views of my blog do not feature in altmetrics scores, never mind Scopus or Web of Science. Altmetrics don’t even measure public engagement very well, never mind academic merit.

Evidence that metrics measure quality

Any metric would be acceptable only if it measured the quality of a person’s work. How could that proposition be tested? In order to judge this, one would have to take a random sample of papers, and look at their metrics 10 or 20 years after publication. The scores would have to be compared with the consensus view of experts in the field. Even then one would have to be careful about the choice of experts (in fields like alternative medicine for example, it would be important to exclude people whose living depended on believing in it). I don’t believe that proper tests have ever been done (and it isn’t in the interests of those who sell metrics to do it).
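One way to make the comparison concrete is a rank correlation between the metric scores and the experts’ ratings for the same sample of papers. The sketch below is only an assumption about how such a comparison might be set up: Spearman’s rank correlation is my choice of statistic, not something that has been prescribed, and the function names and the numbers in the example are hypothetical.

```python
import math


def average_ranks(values):
    """Rank the values from 1 upwards, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: the Pearson correlation of the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical numbers, invented for illustration only.
citations_after_20_years = [5, 40, 12, 300, 75]
expert_rating = [2, 3, 4, 1, 5]  # consensus score from readers of the papers

print(spearman(citations_after_20_years, expert_rating))
```

A value near +1 would mean that the metric ranks papers much as the experts do, a value near zero that it tells you nothing, and a negative value that it is actively misleading. The hard part is not the arithmetic but getting the expert ratings, which means reading the papers.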

The great mistake made by almost all bibliometricians is that they ignore what matters most, the contents of papers. They try to make inferences from correlations of metric scores with other, equally dubious, measures of merit. They can’t afford the time to do the right experiment if only because it would harm their own “productivity”.

The evidence that metrics do what’s claimed for them is almost non-existent. For example, in six of the ten years leading up to the 1991 Nobel prize, Bert Sakmann failed to meet the metrics-based publication target set by Imperial College London, and these failures included the years in which the original single channel paper was published [9] and also the year, 1985, when he published a paper [10] that was subsequently named as a classic in the field [11]. In two of these ten years he had no publications whatsoever. See also [12].

Application of metrics in the way that it’s been done at Imperial and also at Queen Mary College London, would result in firing of the most original minds.

Gaming and the public perception of science

Every form of metric alters behaviour, in such a way that it becomes useless for its stated purpose. This is already well-known in economics, where it’s known as Goodhart’s law (http://en.wikipedia.org/wiki/Goodhart’s_law): “When a measure becomes a target, it ceases to be a good measure”. That alone is a sufficient reason not to extend metrics to science. Metrics have already become one of several perverse incentives that control scientists’ behaviour. They have encouraged gaming, hype, guest authorships and, increasingly, outright fraud [13].

The general public has become aware of this behaviour and it is starting to do serious harm to perceptions of all science. As long ago as 1999, Haerlin & Parr [14] wrote in Nature, under the title How to restore Public Trust in Science,

“Scientists are no longer perceived exclusively as guardians of objective truth, but also as smart promoters of their own interests in a media-driven marketplace.”

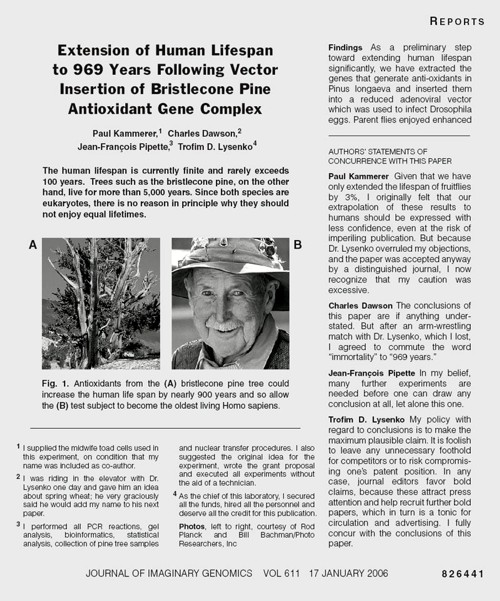

And on January 17, 2006, a vicious spoof on a Science paper appeared, not in a scientific journal, but in the New York Times. See https://www.dcscience.net/?p=156

The use of metrics would provide a direct incentive to this sort of behaviour. It would be a tragedy not only for people who are misjudged by crude numerical indices, but also a tragedy for the reputation of science as a whole.

Conclusion

There is no good evidence that any metric measures quality (at least over the short time span that’s needed for them to be useful for giving grants or deciding on promotions). On the other hand there is good evidence that use of metrics provides a strong incentive to bad behaviour, both by scientists and by journals. They have already started to damage the public perception of the honesty of science.

The conclusion is obvious. Metrics should not be used to judge academic performance.

What should be done?

If metrics aren’t used, how should assessment be done? Roderick Floud was president of Universities UK from 2001 to 2003. He is nothing if not an establishment person. He said recently:

“Each assessment costs somewhere between £20 million and £100 million, yet 75 per cent of the funding goes every time to the top 25 universities. Moreover, the share that each receives has hardly changed during the past 20 years.

It is an expensive charade. Far better to distribute all of the money through the research councils in a properly competitive system.”

The obvious danger of giving all the money to the Research Councils is that people might be fired solely because they didn’t have big enough grants. That’s serious: it’s already happened at Kings College London, Queen Mary London and at Imperial College. This problem might be ameliorated if there were a maximum on the size of grants and/or on the number of papers a person could publish, as I suggested at the open data debate. And it would help if universities appointed vice-chancellors with a better long term view than most seem to have at the moment.

Aggregate metrics? It’s been suggested that the problems are smaller if one looks at aggregated metrics for a whole department, rather than the metrics for individual people. Clearly looking at departments would average out anomalies. The snag is that it wouldn’t circumvent Goodhart’s law. If the money depended on the aggregate score, it would still put great pressure on universities to recruit people with high citations, regardless of the quality of their work, just as it would if individuals were being assessed. That would weigh against thoughtful people (and not least women).

The best solution would be to abolish the REF and give the money to research councils, with precautions to prevent people being fired because their research wasn’t expensive enough. If politicians insist that the "expensive charade" is to be repeated, then I see no option but to continue with a system that’s similar to the present one: that would waste money and distract us from our job.

1. Seglen PO (1997) Why the impact factor of journals should not be used for evaluating research. British Medical Journal 314: 498-502.

2. Colquhoun D (2003) Challenging the tyranny of impact factors. Nature 423: 479.

3. Hawkes AG, Jalali A, Colquhoun D (1990) The distributions of the apparent open times and shut times in a single channel record when brief events can not be detected. Philosophical Transactions of the Royal Society London A 332: 511-538.

4. Hawkes AG, Jalali A, Colquhoun D (1992) Asymptotic distributions of apparent open times and shut times in a single channel record allowing for the omission of brief events. Philosophical Transactions of the Royal Society London B 337: 383-404.

5. Colquhoun D, Sigworth FJ (1995) Fitting and statistical analysis of single-channel records. In: Sakmann B, Neher E, editors. Single Channel Recording. New York: Plenum Press. pp. 483-587.

6. David Colquhoun on Google Scholar. Available: http://scholar.google.co.uk/citations?user=JXQ2kXoAAAAJ&hl=en 17-6-2014

7. Ioannidis JP (2005) Why most published research findings are false. PLoS Med 2: e124.

8. Colquhoun D, Plested AJ. Why you should ignore altmetrics and other bibliometric nightmares. Available: https://www.dcscience.net/?p=6369

9. Neher E, Sakmann B (1976) Single channel currents recorded from membrane of denervated frog muscle fibres. Nature 260: 799-802.

10. Colquhoun D, Sakmann B (1985) Fast events in single-channel currents activated by acetylcholine and its analogues at the frog muscle end-plate. J Physiol (Lond) 369: 501-557.

11. Colquhoun D (2007) What have we learned from single ion channels? J Physiol 581: 425-427.

12. Colquhoun D (2007) How to get good science. Physiology News 69: 12-14. See also https://www.dcscience.net/?p=182

13. Oransky, I. Retraction Watch. Available: http://retractionwatch.com/ 18-6-2014

14. Haerlin B, Parr D (1999) How to restore public trust in science. Nature 400: 499. doi:10.1038/22867.

Follow-up

Some other posts on this topic

Why Metrics Cannot Measure Research Quality: A Response to the HEFCE Consultation

Gaming Google Scholar Citations, Made Simple and Easy

Manipulating Google Scholar Citations and Google Scholar Metrics: simple, easy and tempting

Driving Altmetrics Performance Through Marketing

Death by Metrics (October 30, 2013)

Not everything that counts can be counted

Using metrics to assess research quality By David Spiegelhalter “I am strongly against the suggestion that peer–review can in any way be replaced by bibliometrics”

1 July 2014

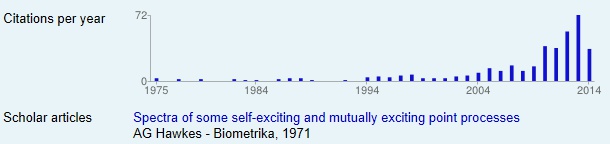

My brilliant statistical colleague, Alan Hawkes, not only laid the foundations for single molecule analysis (and made a career for me): before he got into that, he also wrote a paper, Spectra of some self-exciting and mutually exciting point processes (Biometrika 1971). In that paper he described a sort of stochastic process now known as a Hawkes process. In the simplest sort of stochastic process, the Poisson process, events are independent of each other. In a Hawkes process, the occurrence of an event affects the probability of another event occurring, so, for example, events may occur in clusters. Such processes were used for many years to describe the occurrence of earthquakes. More recently, it’s been noticed that such models are useful in finance, marketing, terrorism, burglary, social media, DNA analysis, and to describe invasive banana trees. The 1971 paper languished in relative obscurity for 30 years. Now the citation rate has shot through the roof.
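For readers who would like to see the clustering, here is a minimal simulation sketch. It assumes the commonly used exponential form of the self-exciting intensity, λ(t) = μ + α Σ exp(−β(t − tᵢ)), summed over past events tᵢ, and generates events by Ogata’s thinning method. The kernel, the parameter names and the values in the example are my assumptions for illustration; they are not taken from the 1971 paper.

```python
import math
import random


def simulate_hawkes(mu, alpha, beta, horizon, seed=0):
    """Event times on (0, horizon] for the assumed exponential-kernel process.

    mu    : background rate (must be > 0)
    alpha : jump in the intensity caused by each event
    beta  : rate at which that jump decays
    """
    rng = random.Random(seed)
    events = []
    t = 0.0
    excitation = 0.0  # self-exciting part of the intensity just after time t
    while True:
        # The intensity can only decay until the next event, so its
        # current value is an upper bound for the thinning step.
        upper = mu + excitation
        wait = rng.expovariate(upper)
        t += wait
        if t > horizon:
            return events
        excitation *= math.exp(-beta * wait)
        # Accept the candidate with probability (true intensity) / (bound).
        if rng.random() * upper <= mu + excitation:
            events.append(t)
            excitation += alpha  # each event raises the chance of another


# alpha = 0 gives an ordinary Poisson process with independent events.
poisson_times = simulate_hawkes(mu=0.5, alpha=0.0, beta=1.0, horizon=200.0)
hawkes_times = simulate_hawkes(mu=0.5, alpha=0.8, beta=1.0, horizon=200.0)
print(len(poisson_times), len(hawkes_times))
```

With this parameterisation each event triggers, on average, α/β further events, so α/β must be less than 1 for the process to settle down, and the long-run event rate is then μ/(1 − α/β). Plotting the two sets of times on a line should show the Hawkes events arriving in bursts, while the Poisson events are scattered independently.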

The papers about Hawkes processes are mostly highly mathematical. They are not the sort of thing that features on Twitter. They are serious science, not just another ghastly epidemiological survey of diet and health. Anybody who cites papers of this sort is likely to be a real scientist. The surge in citations suggests to me that the 1971 paper was indeed an important bit of work (because the citations will be made by serious people). How does this affect my views about the use of citations? It shows that even highly mathematical work can achieve respectable citation rates, but it may take a long time before its importance is realised. If Hawkes had been judged by citation counting while he was applying for jobs and promotions, he’d probably have been fired. If his department had been judged by citations of this paper, it would not have scored well. It takes a long time to judge the importance of a paper and that makes citation counting almost useless for decisions about funding and promotion.

Stop press. Financial report casts doubt on Trainor’s claims

Science has a big problem. Most jobs are desperately insecure. It’s hard to do long term thorough work when you don’t know whether you’ll be able to pay your mortgage in a year’s time. The appalling career structure for young scientists has been the subject of much writing by the young (e.g. Jenny Rohn) and the old, e.g. Bruce Alberts, Peter Lawrence (see also Real Lives and White Lies in the Funding of Scientific Research), and by me.

Until recently, this problem was largely restricted to post-doctoral fellows (postdocs). They already have PhDs and they are the people who do most of the experiments. Often large numbers of them work for a single principal investigator (PI). The PI spends most of his or her time writing grant applications and traveling the world to hawk the wares of the lab. They also (to variable extents) teach students and deal with endless hassle from HR.

The salaries of most postdocs are paid from grants that last for three or sometimes five years. If that grant doesn’t get renewed, they are on the streets.

Universities have come to exploit their employees almost as badly as Amazon does.

The periodical research assessments not only waste large amounts of time and money, but they have distorted behaviour. In the hope of scoring highly, universities recruit a lot of people before the submission, but as soon as that’s done with, they find that they can’t afford all of them, so some get cast aside like worn out old boots. Universities have allowed themselves to become dependent on "soft money" from grant-giving bodies. That strikes me as bad management.

The situation is even worse in the USA where most teaching staff rely on research grants to pay their salaries.

I have written three times about the insane methods that are being used to fire staff at Queen Mary College London (QMUL).

Is Queen Mary University of London trying to commit scientific suicide? (June 2012)

Queen Mary, University of London in The Times. Does Simon Gaskell care? (July 2012) and a version of it appeared in The Times (Thunderer column)

In which Simon Gaskell, of Queen Mary, University of London, makes a cock-up (August 2012)

The ostensible reason given there was to boost its ratings in university rankings. Their vice-chancellor, Simon Gaskell, seems to think that by firing people he can produce a university that’s full of Nobel prize-winners. The effect, of course, is just the opposite. Treating people like pawns in a game makes the good people leave and only those who can’t get a job with a better employer remain. That’s what I call bad management.

At QMUL people were chosen to be fired on the basis of a plain silly measure of their publication record, and by their grant income. That was combined with terrorisation of any staff who spoke out about the process (more on that coming soon).

Kings College London is now doing the same sort of thing. They have announced that they’ll fire 120 of the 777 staff in the schools of medicine and biomedical sciences, and the Institute of Psychiatry. These are humans, with children and mortgages to pay. One might ask why they were taken on in the first place, if the university can’t afford them. That’s simply bad financial planning (or was it done in order to boost their Research Excellence submission?).

Surely it’s been obvious, at least since 2007, that hard financial times were coming, but that didn’t dent the hubris of the people who took on so many staff. HEFCE has failed to find a sensible way to fund universities. The attempt to separate the funding of teaching and research has just led to corruption.

The way in which people are to be chosen for the firing squad at Kings is crude in the extreme. If you are a professor at the Institute of Psychiatry then, unless you do a lot of teaching, you must have a grant income of at least £200,000 per year. You can read all the details in the Kings’ “Consultation document” that was sent to all employees. It’s headed "CONFIDENTIAL – Not for further circulation". Vice-chancellors still don’t seem to have realised that it’s no longer possible to keep things like this secret. In releasing it, I take my cue from George Orwell.

"Journalism is printing what someone else does not want printed: everything else is public relations."

There is no mention of the quality of your research, just income. Since in most sorts of research, the major cost is salaries, this rewards people who take on too many employees. Only too frequently, large groups are the ones in which students and research staff get the least supervision, and in which bangs per buck are lowest. The university should be rewarding people who are deeply involved in research themselves, those with small groups. Instead, they are doing exactly the opposite.

Women are, I’d guess, less susceptible to the grandiosity of the enormous research group, so no doubt they will suffer disproportionately. PhD students will also suffer if their supervisor is fired while they are halfway through their projects.

An article in Times Higher Education pointed out

"According to the Royal Society’s 2010 report The Scientific Century: Securing our Future Prosperity, in the UK, 30 per cent of science PhD graduates go on to postdoctoral positions, but only around 4 per cent find permanent academic research posts. Less than half of 1 per cent of those with science doctorates end up as professors."

The panel that decides whether you’ll be fired consists of Professor Sir Robert Lechler, Professor Anne Greenough, Professor Simon Howell, Professor Shitij Kapur, Professor Karen O’Brien, Chris Mottershead, Rachel Parr & Carol Ford. If they had the slightest integrity, they’d refuse to implement such obviously silly criteria.

Universities in general, not only Kings and QMUL, have become over-reliant on research funders to enhance their own reputations. PhD students and research staff are employed for the benefit of the university (and of the principal investigator), not for the benefit of the students or research staff, who are treated as expendable cost units, not as humans.

One thing that we expect of vice-chancellors is sensible financial planning. That seems to have failed at Kings. One would also hope that they would understand how to get good science. My only previous encounter with Kings’ vice chancellor, Rick Trainor, suggests that this is not where his talents lie. While he was president of Universities UK (UUK), I suggested to him that degrees in homeopathy were not a good idea. His response was that of the true apparatchik.

“. . . degree courses change over time, are independently assessed for academic rigour and quality and provide a wider education than the simple description of the course might suggest”

That is hardly a response that suggests high academic integrity.

The students’ petition is on Change.org.

Follow-up

The problems that are faced in the UK are very similar to those in the USA. They have been described with superb clarity in “Rescuing US biomedical research from its systemic flaws”. This article, by Bruce Alberts, Marc W. Kirschner, Shirley Tilghman, and Harold Varmus, should be read by everyone. They observe that “. . . little has been done to reform the system, primarily because it continues to benefit more established and hence more influential scientists”. I’d be more impressed by the senior people at Kings if they spent time trying to improve the system rather than firing people because their research is not sufficiently expensive.

10 June 2014

Progress on the cull, according to an anonymous correspondent

“The omnishambles that is KCL management

1) We were told we would receive our orange (at risk) or green letters (not at risk, this time) on Thursday PM 5th June as HR said that it’s not good to get bad news on a Friday!

2) We all got a letter on Friday that we would not be receiving our letters until Monday, so we all had a tense weekend

3) I finally got my letter on Monday, in my case it was “green” however a number of staff who work very hard at KCL doing teaching and research are “orange”, un bloody believable

As you can imagine the moral at King’s has dropped through the floor”

18 June 2014

Dorothy Bishop has written about the Trainor problem. Her post ends “One feels that if KCL were falling behind in a boat race, they’d respond by throwing out some of the rowers”.

The students’ petition can be found on the #KCLHealthSOS site. There is a reply to the petition, from Professor Sir Robert Lechler, and a rather better written response to it from students. Lechler’s response merely repeats the weasel words, and it attacks a few straw men without providing the slightest justification for the criteria that are being used to fire people. One can’t help noticing how often knighthoods go to the best apparatchiks rather than the best scientists.

14 July 2014

A 2013 report on Kings from Standard & Poor’s casts doubt on Trainor’s claims

Download the report from Standard and Poor’s Rating Service

A few things stand out.

- KCL is in a strong financial position with lower debt than other similar Universities and cash reserves of £194 million.

- The report says that KCL does carry some risk into the future especially that related to its large capital expansion program.

- The report specifically warns KCL over the consequences of any staff cuts. Particularly relevant are the following quotations

- page 3: “Further staff-cost curtailment will be quite difficult … pressure to maintain its academic and non-academic service standards will weigh on its ability to cut costs further.”

- page 4 The report goes on to say (see the section headed outlook, especially the final paragraph) that any decrease in KCL’s academic reputation (e.g. consequent on staff cuts) would be likely to impair its ability to attract overseas students and therefore adversely affect its financial position.

- page 10 makes clear that KCL managers are privately aiming at 10% surplus, above the 6% operating surplus they talk about with us. However, S&P considers that ‘ambitious’. In other words KCL are shooting for double what a credit rating agency considers realistic.

One can infer from this that

- what staff have been told about the cuts being an immediate necessity is absolute nonsense

- KCL was warned against staff cuts by a credit agency

- the main problem KCL has is its overambitious building policy

- KCL is implementing a policy (staff cuts) which S & P warned against as they predict it may result in diminishing income.

What on earth is going on?

16 July 2014

I’ve been sent yet another damning document. The BMA’s response to Kings contains some numbers that seem to have escaped the attention of managers at Kings.

10 April 2015

King’s draft performance management plan for 2015

This document has just come to light (the highlighting is mine).

It’s labelled as "released for internal consultation". It seems that managers are slow to realise that it’s futile to try to keep secrets.

The document applies only to the Institute of Psychiatry, Psychology and Neuroscience at King’s College London: "one of the global leaders in the fields", the usual tedious blah that prefaces every document from every university.

It’s fascinating to me that the most cruel treatment of staff so often seems to arise in medical-related areas. I thought psychiatrists, of all people, were meant to understand people, not to kill them.

This document is not quite as crude as Imperial’s assessment, but it’s quite bad enough. Like other such documents, it pretends that it’s for the benefit of its victims. In fact it’s for the benefit of willy-waving managers who are obsessed by silly rankings.

Here are some of the sillier bits.

"The Head of Department is also responsible for ensuring that aspects of reward/recognition and additional support that are identified are appropriately followed through"

And, presumably, for firing people, but let’s not mention that.

"Academics are expected to produce original scientific publications of the highest quality that will significantly advance their field."

That’s what everyone has always tried to do. It can’t be compelled by performance managers. A large element of success is pure luck. That’s why they’re called experiments.

"However, it may take publications 12-18 months to reach a stable trajectory of citations, therefore, the quality of a journal (impact factor) and the judgment of knowledgeable peers can be alternative indicators of excellence."

It can also take 40 years for work to be cited. And there is little reason to believe that citations, especially those within 12-18 months, measure quality. And it is known for sure that "the quality of a journal (impact factor)" does not correlate with quality (or indeed with citations).

Later we read

"H Index and Citation Impact: These are good objective measures of the scientific impact of publications"

NO, they are simply not a measure of quality (though this time they say “impact” rather than “excellence”).

The people who wrote that seem to be unaware of the most basic facts about science.

Then

"Carrying out high quality scientific work requires research teams"

Sometimes it does, sometimes it doesn’t. In the past the best work has been done by one or two people. In my field, think of Hodgkin & Huxley, Katz & Miledi or Neher & Sakmann. All got Nobel prizes. All did the work themselves. Performance managers might well have fired them before they got started.

By specifying minimum acceptable group sizes, King’s are really specifying minimum acceptable grant income, just like Imperial and Warwick. Nobody will be taken in by the thin attempt to disguise it.

The specification that a professor should have "Primary supervision of three or more PhD students, with additional secondary supervision." is particularly iniquitous. Everyone knows that far too many PhDs are being produced for the number of jobs that are available. This stipulation is not for the benefit of the young. It’s to ensure a supply of cheap labour to churn out more papers and help to lift the university’s ranking.

The document is not signed, but the document properties name its author. But she’s not a scientist and is presumably acting under orders, so please don’t blame her for this dire document. Blame the vice-chancellor.

Performance management is a direct incentive to do shoddy short-cut science.

No wonder that The Economist says "scientists are doing too much trusting and not enough verifying—to the detriment of the whole of science, and of humanity".

Feel ashamed.