This discussion seemed to be of sufficient general interest that we submitted it as a feature to eLife, because this journal is one of the best steps into the future of scientific publishing. Sadly the features editor thought that "too much of the article is taken up with detailed criticisms of research papers from NEJM and Science that appeared in the altmetrics top 100 for 2013; while many of these criticisms seems valid, the Features section of eLife is not the venue where they should be published". That’s pretty typical of what most journals would say. It is that sort of attitude that stifles criticism, and that is part of the problem. We should be encouraging post-publication peer review, not suppressing it. Luckily, thanks to the web, we are now much less constrained by journal editors than we used to be.

Here it is.

Scientists don’t count: why you should ignore altmetrics and other bibliometric nightmares

David Colquhoun1 and Andrew Plested2

1 University College London, Gower Street, London WC1E 6BT

2 Leibniz-Institut für Molekulare Pharmakologie (FMP) & Cluster of Excellence NeuroCure, Charité Universitätsmedizin, Timoféeff-Ressowsky-Haus, Robert-Rössle-Str. 10, 13125 Berlin, Germany.

Jeffrey Beall is a librarian at Auraria Library, University of Colorado Denver. Although not a scientist himself, he, more than anyone, has done science a great service by listing the predatory journals that have sprung up in the wake of pressure for open access. In August 2012 he published “Article-Level Metrics: An Ill-Conceived and Meretricious Idea”. At first reading that criticism seemed a bit strong. On mature consideration, it understates the potential that bibliometrics, altmetrics especially, have to undermine both science and scientists.

Altmetrics is the latest buzzword in the vocabulary of bibliometricians. It attempts to measure the “impact” of a piece of research by counting the number of times that it’s mentioned in tweets, Facebook pages, blogs, YouTube and news media. That sounds childish, and it is. Twitter is an excellent tool for journalism. It’s good for debunking bad science, and for spreading links, but too brief for serious discussions. It’s rarely useful for real science.

Surveys suggest that the great majority of scientists do not use Twitter (only 7 to 13% do). Scientific works get tweeted about mostly because they have titles that contain buzzwords, not because they represent great science.

What and who is Altmetrics for?

The aims of altmetrics are ambiguous to the point of dishonesty; they depend on whether the salesperson is talking to a scientist or to a potential buyer of their wares.

At a meeting in London, an employee of altmetric.com said “we measure online attention surrounding journal articles”, “we are not measuring quality …”, “this whole altmetrics data service was born as a service for publishers”, “it doesn’t matter if you got 1000 tweets . . . all you need is one blog post that indicates that someone got some value from that paper”.

These ideas sound fairly harmless, but in stark contrast, Jason Priem (an author of the altmetrics manifesto) said one advantage of altmetrics is that it’s fast: “Speed: months or weeks, not years: faster evaluations for tenure/hiring”. Although conceivably useful for disseminating preliminary results, such speed isn’t important for serious science (the kind that ought to be considered for tenure), which operates on the timescale of years. Priem also says “researchers must ask if altmetrics really reflect impact”. Even he doesn’t know, yet altmetrics services are being sold to universities before any evaluation of their usefulness has been done, and universities are buying them. The idea that altmetrics scores could be used for hiring is nothing short of terrifying.

The problem with bibliometrics

The mistake made by all bibliometricians is that they fail to consider the content of papers, because they have no desire to understand research. Bibliometrics are for people who aren’t prepared to take the time (or lack the mental capacity) to evaluate research by reading about it, or in the case of software or databases, by using them. The use of surrogate outcomes in clinical trials is rightly condemned. Bibliometrics are all about surrogate outcomes.

If instead we consider the work described in particular papers that most people agree to be important (or that everyone agrees to be bad), it’s immediately obvious that no publication metrics can measure quality. There are some examples in How to get good science (Colquhoun, 2007). It is shown there that at least one Nobel prize winner failed dismally to fulfil arbitrary bibliometric productivity criteria of the sort imposed in some universities (another example is in Is Queen Mary University of London trying to commit scientific suicide?).

Schekman (2013) has said that science

“is disfigured by inappropriate incentives. The prevailing structures of personal reputation and career advancement mean the biggest rewards often follow the flashiest work, not the best.”

Bibliometrics reinforce those inappropriate incentives. A few examples will show that altmetrics are one of the silliest metrics so far proposed.

The altmetrics top 100 for 2013

The superficiality of altmetrics is demonstrated beautifully by the list of the 100 papers with the highest altmetric scores in 2013. For a start, 58 of the 100 were behind paywalls, and so unlikely to have been read except (perhaps) by academics.

The second most popular paper (with the enormous altmetric score of 2230) was published in the New England Journal of Medicine. The title was Primary Prevention of Cardiovascular Disease with a Mediterranean Diet. It was promoted (inaccurately) by the journal with the following tweet:

Many of the 2092 tweets related to this article simply gave the title, but inevitably the theme appealed to diet faddists, with plenty of tweets like the following:

The interpretations of the paper promoted by these tweets were mostly desperately inaccurate. Diet studies are anyway notoriously unreliable. As John Ioannidis has said

"Almost every single nutrient imaginable has peer reviewed publications associating it with almost any outcome."

This sad situation comes about partly because most of the data comes from non-randomised cohort studies that tell you nothing about causality, and also because the effects of diet on health seem to be quite small.

The study in question was a randomized controlled trial, so it should be free of the problems of cohort studies. But very few tweeters showed any sign of having read the paper. When you read it you find that the story isn’t so simple. Many of the problems are pointed out in the online comments that follow the paper. Post-publication peer review really can work, but you have to read the paper. The conclusions are pretty conclusively demolished in the comments, such as:

“I’m surrounded by olive groves here in Australia and love the hand-pressed EVOO [extra virgin olive oil], which I can buy at a local produce market BUT this study shows that I won’t live a minute longer, and it won’t prevent a heart attack.”

We found no tweets that mentioned the finding from the paper that the diets had no detectable effect on myocardial infarction, death from cardiovascular causes, or death from any cause. The only difference was in the number of people who had strokes, and that showed a very unimpressive P = 0.04.

Neither did we see any tweets that mentioned the truly impressive list of conflicts of interest of the authors, which ran to an astonishing 419 words.

“Dr. Estruch reports serving on the board of and receiving lecture fees from the Research Foundation on Wine and Nutrition (FIVIN); serving on the boards of the Beer and Health Foundation and the European Foundation for Alcohol Research (ERAB); receiving lecture fees from Cerveceros de España and Sanofi-Aventis; and receiving grant support through his institution from Novartis. Dr. Ros reports serving on the board of and receiving travel support, as well as grant support through his institution, from the California Walnut Commission; serving on the board of the Flora Foundation (Unilever). . .”

And so on, for another 328 words.

The interesting question is how such a paper came to be published in the hugely prestigious New England Journal of Medicine. That it happened is yet another reason to distrust impact factors. It seems to be another sign that glamour journals are more concerned with trendiness than quality.

One sign of that is the fact that the journal’s own tweet misrepresented the work. The irresponsible spin in this initial tweet from the journal started the ball rolling, and after this point, the content of the paper itself became irrelevant. The altmetrics score is utterly disconnected from the science reported in the paper: it more closely reflects wishful thinking and confirmation bias.

The fourth paper in the altmetrics top 100 is an equally instructive example.

This work was also published in a glamour journal, Science. The paper claimed that a function of sleep was to “clear metabolic waste from the brain”. It was initially promoted (inaccurately) on Twitter by the publisher of Science. After that, the paper was retweeted many times, presumably because everybody sleeps, and perhaps because the title hinted at the trendy, but fraudulent, idea of “detox”. Many tweets were variants of “The garbage truck that clears metabolic waste from the brain works best when you’re asleep”.

But this paper was hidden behind Science’s paywall. It’s bordering on irresponsible for journals to promote on social media papers that can’t be read freely. It’s unlikely that anyone outside academia had read it, and therefore few of the tweeters had any idea of the actual content, or the way the research was done. Nevertheless it got “1,479 tweets from 1,355 accounts with an upper bound of 1,110,974 combined followers”. It had the huge Altmetrics score of 1848, the highest altmetric score in October 2013.

Within a couple of days, the story fell out of the news cycle. It was not a bad paper, but neither was it a huge breakthrough. It didn’t show that naturally-produced metabolites were cleared more quickly, just that injected substances were cleared faster when the mice were asleep or anaesthetised. This finding might or might not have physiological consequences for mice.

Worse, the paper also claimed that “Administration of adrenergic antagonists induced an increase in CSF tracer influx, resulting in rates of CSF tracer influx that were more comparable with influx observed during sleep or anesthesia than in the awake state”. Simply put, giving awake mice these drugs raised tracer influx towards the levels seen during sleep or anaesthesia. But nobody seemed to notice the absurd concentrations of antagonists that were used in these experiments: “adrenergic receptor antagonists (prazosin, atipamezole, and propranolol, each 2 mM) were then slowly infused via the cisterna magna cannula for 15 min”. The binding constant (concentration to occupy half the receptors) for prazosin is less than 1 nM, so infusing 2 mM is working at more than a million times the concentration that should be effective. That’s asking for non-specific effects. Most drugs at this sort of concentration have local anaesthetic effects, so perhaps it isn’t surprising that the effects resembled those of ketamine.
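The arithmetic behind that comparison is easy to check. The sketch below is ours, not the paper’s: it assumes simple one-site equilibrium binding (the Hill-Langmuir equation), takes 1 nM as an upper bound for the prazosin binding constant, and uses a purely hypothetical 1000-fold dilution in the CSF to show that even generous allowances leave the concentration far above anything needed to occupy the receptors. The function names are illustrative only.

```python
# Back-of-envelope check of the prazosin concentrations discussed above.
# Assumptions (ours, not the paper's): one-site binding at equilibrium,
# K = 1 nM as an upper bound, and a hypothetical dilution factor.


def fold_excess(conc_molar: float, k_molar: float) -> float:
    """How many times the concentration exceeds the binding constant."""
    return conc_molar / k_molar


def occupancy(conc_molar: float, k_molar: float) -> float:
    """Hill-Langmuir fractional occupancy: [A] / ([A] + K)."""
    return conc_molar / (conc_molar + k_molar)


K_PRAZOSIN = 1e-9            # 1 nM, upper bound quoted in the text
INFUSED = 2e-3               # 2 mM, concentration infused in the paper
HYPOTHETICAL_DILUTION = 1000  # illustrative only; the real dilution is unknown

diluted = INFUSED / HYPOTHETICAL_DILUTION

print(f"Infused / K: {fold_excess(INFUSED, K_PRAZOSIN):.1e}")
print(f"After hypothetical dilution / K: {fold_excess(diluted, K_PRAZOSIN):.1e}")
print(f"Occupancy at 10 x K: {occupancy(10 * K_PRAZOSIN, K_PRAZOSIN):.3f}")
print(f"Occupancy after hypothetical dilution: {occupancy(diluted, K_PRAZOSIN):.5f}")
```

On these assumptions a concentration only ten times the binding constant already occupies about 91% of the receptors, so the many extra orders of magnitude add nothing specific and can only invite off-target effects.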

The altmetrics editor hadn’t noticed the problems and none of them featured in the online buzz. That’s partly because to find them you had to read the paper (the antagonist concentrations were hidden in the legend of Figure 4), and partly because you needed to know the binding constant for prazosin to see this warning sign.

The lesson, as usual, is that if you want to know about the quality of a paper, you have to read it. Commenting on a paper without knowing anything of its content is liable to make you look like a jackass.

A tale of two papers

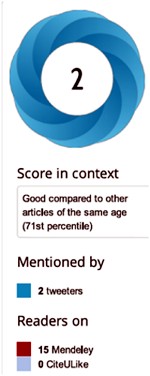

Another approach that looks at individual papers is to compare some of one’s own papers. Sadly, UCL shows altmetric scores on each of your own papers. Mostly they are question marks, because nothing published before 2011 is scored. But two recent papers make an interesting contrast. One is from DC’s side interest in quackery, one was real science. The former has an altmetric score of 169, the latter has an altmetric score of 2.

The first paper was “Acupuncture is a theatrical placebo”, which was published as an invited editorial in Anesthesia and Analgesia [download pdf]. The paper was scientifically trivial. It took perhaps a week to write. Nevertheless, it got promoted on Twitter, because anything to do with alternative medicine is interesting to the public. It got quite a lot of retweets. And the resulting altmetric score of 169 put it in the top 1% of all articles altmetric have tracked, and the second highest ever for Anesthesia and Analgesia. As well as the journal’s own website, the article was also posted on the DCScience.net blog (May 30, 2013) where it soon became the most viewed page ever (24,468 views as of 23 November 2013), something that altmetrics does not seem to take into account.

Compare this with the fate of some real, but rather technical, science.

My [DC] best scientific papers are too old (i.e. before 2011) to have an altmetrics score, but my best score for any scientific paper is 2. This score was for Colquhoun & Lape (2012) “Allosteric coupling in ligand-gated ion channels”. It was a commentary with some original material. The altmetric score was based on two tweets and 15 readers on Mendeley. One of the two tweets was from me (“Real science; The meaning of allosteric conformation changes http://t.co/zZeNtLdU ”). The other was an abusive one from a cyberstalker who was upset at having been refused a job years ago. Incredibly, this modest achievement got it rated “Good compared to other articles of the same age (71st percentile)”.

Conclusions about bibliometrics

Bibliometricians spend much time correlating one surrogate outcome with another, from which they learn little. What they don’t do is take the time to examine individual papers. Doing that makes it obvious that most metrics, and especially altmetrics, are indeed an ill-conceived and meretricious idea. Universities should know better than to subscribe to them.

Although altmetrics may be the silliest bibliometric idea yet, much of this criticism applies equally to all such metrics. Even the most plausible metric, counting citations, is easily shown to be nonsense by simply considering individual papers. All you have to do is choose some papers that are universally agreed to be good, and some that are bad, and see how metrics fail to distinguish between them. This is something that bibliometricians fail to do (perhaps because they don’t know enough science to tell which is which). Some examples are given by Colquhoun (2007) (more complete version at dcscience.net).

Eugene Garfield, who started the metrics mania with the journal impact factor (JIF), was clear that it was not suitable as a measure of the worth of individuals. He has been ignored and the JIF has come to dominate the lives of researchers, despite decades of evidence of the harm it does (e.g. Seglen (1997) and Colquhoun (2003)). In the wake of JIF, young, bright people have been encouraged to develop yet more spurious metrics (of which ‘altmetrics’ is the latest). It doesn’t matter much whether these metrics are based on nonsense (like counting hashtags) or rely on counting links or comments on a journal website. They won’t (and can’t) indicate what is important about a piece of research: its quality.

People say – I can’t be a polymath. Well, then don’t try to be. You don’t have to have an opinion on things that you don’t understand. The number of people who really do have to have an overview, of the kind that altmetrics might purport to give, those who have to make funding decisions about work that they are not intimately familiar with, is quite small. Chances are, you are not one of them. We review plenty of papers and grants. But it’s not credible to accept assignments outside of your field, and then rely on metrics to assess the quality of the scientific work or the proposal.

It’s perfectly reasonable to give credit for all forms of research outputs, not only papers. That doesn’t need metrics. It’s nonsense to suggest that altmetrics are needed because research outputs are not already valued in grant and job applications. If you write a grant for almost any agency, you can put your CV. If you have a non-publication based output, you can always include it. Metrics are not needed. If you write software, get the numbers of downloads. Software normally garners citations anyway if it’s of any use to the greater community.

When AP recently wrote a criticism of Heather Piwowar’s altmetrics note in Nature, one correspondent wrote: "I haven’t read the piece [by HP] but I’m sure you are mischaracterising it". This attitude summarizes the too-long-didn’t-read (TLDR) culture that is increasingly becoming accepted amongst scientists, and which the comparisons above show is a central component of altmetrics.

Altmetrics are numbers generated by people who don’t understand research, for people who don’t understand research. People who read papers and understand research just don’t need them and should shun them.

But all bibliometrics give cause for concern, beyond their lack of utility. They do active harm to science. They encourage “gaming” (a euphemism for cheating). They encourage short-term eye-catching research of questionable quality and reproducibility. They encourage guest authorships: that is, they encourage people to claim credit for work which isn’t theirs. At worst, they encourage fraud.

No doubt metrics have played some part in the crisis of irreproducibility that has engulfed some fields, particularly experimental psychology, genomics and cancer research. Underpowered studies with a high false-positive rate may get you promoted, but tend to mislead both other scientists and the public (who in general pay for the work). The waste of public money that results from following up badly done work that can’t be reproduced, but that was published for the sake of “getting something out”, has not been quantified. It must nevertheless be counted against bibliometrics, and sadly it outweighs any advantages from rapid dissemination. Yet universities continue to pay publishers to provide these measures, which do nothing but harm. And the general public has noticed.

It’s now eight years since the New York Times brought to the attention of the public that some scientists engage in puffery, cheating and even fraud.

Overblown press releases written by journals, with the connivance of university PR wonks and of the authors, sometimes go viral on social media (and so score well on altmetrics). Yet another example, from the Journal of the American Medical Association, involved an overblown press release from the journal about a trial that allegedly showed a benefit of high doses of Vitamin E for Alzheimer’s disease.

This sort of puffery harms patients and harms science itself.

We can’t go on like this.

What should be done?

Post publication peer review is now happening, in comments on published papers and through sites like PubPeer, where it is already clear that anonymous peer review can work really well. New journals like eLife have open comments after each paper, though authors do not seem to have yet got into the habit of using them constructively. They will.

It’s very obvious that too many papers are being published, and that anything, however bad, can be published in a journal that claims to be peer reviewed. To a large extent this is just another example of the harm done to science by metrics: the publish or perish culture.

Attempts to regulate science by setting “productivity targets” are doomed to do as much harm to science as they have in the National Health Service in the UK. This has been known to economists for a long time, under the name of Goodhart’s law.

Here are some ideas about how we could restore the confidence of both scientists and of the public in the integrity of published work.

- Nature, Science, and other vanity journals should become news magazines only. Their glamour value distorts science and encourages dishonesty.

- Print journals are overpriced and outdated. They are no longer needed. Publishing on the web is cheap, and it allows open access and post-publication peer review. Every paper should be followed by an open comments section, with anonymity allowed. The old publishers should go the same way as the handloom weavers. Their time has passed.

- Web publication allows proper explanation of methods, without the page, word and figure limits that distort papers in vanity journals. This would also make it very easy to publish negative work, thus reducing publication bias, a major problem (not least for clinical trials).

- Publish or perish has proved counterproductive. It seems just as likely that better science will result without any performance management at all. All that’s needed is peer review of grant applications.

- Providing more small grants rather than fewer big ones should help to reduce the pressure to publish which distorts the literature. The ‘celebrity scientist’, running a huge group funded by giant grants, has not worked well. It’s led to poor mentoring, and, at worst, fraud. Of course huge groups sometimes produce good work, but too often at the price of exploitation of junior scientists.

- There is a good case for limiting the number of original papers that an individual can publish per year, and/or total funding. Fewer but more complete and considered papers would benefit everyone, and counteract the flood of literature that has led to superficiality.

- Everyone should read, learn and inwardly digest Peter Lawrence’s The Mismeasurement of Science.

A focus on speed and brevity (cited as major advantages of altmetrics) will help no-one in the end. And a focus on creating and curating new metrics will simply skew science in yet another unsatisfactory way, and rob scientists of the time they need to do their real job: generate new knowledge.

It has been said

“Creation is sloppy; discovery is messy; exploration is dangerous. What’s a manager to do?

The answer in general is to encourage curiosity and accept failure. Lots of failure.”

And, one might add, forget metrics. All of them.

Follow-up

17 Jan 2014

This piece was noticed by the Economist. Their ‘Writing worth reading’ section said

"Why you should ignore altmetrics (David Colquhoun) Altmetrics attempt to rank scientific papers by their popularity on social media. David Colquohoun [sic] argues that they are “for people who aren’t prepared to take the time (or lack the mental capacity) to evaluate research by reading about it.”"

20 January 2014.

Jason Priem, of ImpactStory, has responded to this article on his own blog. In Altmetrics: A Bibliographic Nightmare? he seems to back off a lot from his earlier claim (cited above) that altmetrics are useful for making decisions about hiring or tenure. Our response is on his blog.

23 January 2014

The Scholarly Kitchen blog carried another paean to metrics. A vigorous discussion followed. The general line that I’ve followed in this discussion, and those mentioned below, is that bibliometricians won’t qualify as scientists until they test their methods, i.e. show that they predict something useful. In order to do that, they’ll have to consider individual papers (as we do above). At present, articles by bibliometricians consist largely of hubris, with little emphasis on the potential to cause corruption. They remind me of articles by homeopaths: their aim is to sell a product (sometimes for cash, but mainly to promote the authors’ usefulness).

It’s noticeable that all of the pro-metrics articles cited here have been written by bibliometricians. None have been written by scientists.

28 January 2014.

Dalmeet Singh Chawla, a bibliometrician from Imperial College London, wrote a blog on the topic. (Imperial, at least in its Medicine department, is notorious for abuse of metrics.)

29 January 2014 Arran Frood wrote a sensible article about the metrics row in Euroscientist.

2 February 2014 Paul Groth (a co-author of the Altmetrics Manifesto) posted more hubristic stuff about altmetrics on Slideshare. A vigorous discussion followed.

5 May 2014. Another vigorous discussion on ImpactStory blog, this time with Stacy Konkiel. She’s another non-scientist trying to tell scientists what to do. The evidence that she produced for the usefulness of altmetrics seemed pathetic to me.

7 May 2014 A much-shortened version of this post appeared in the British Medical Journal (BMJ blogs).

Maybe the answer lies in the comment “We all cannot be polymath”. Right, we cannot be. If the authors also write a note on the paper that addresses the general public, it will help everyone to read and hopefully not distort the research. It will also address the frequent complaint that science has become too technical to follow.

I agree. There is a lot to be said for having a plain language summary of papers, at least for those papers that are likely to interest the general public. The problem would be to ensure that the summaries were accurate, not hype. It’s probably too much to hope that either authors or journal editors will give up unjustified hype, as the examples we cite show. The best hope is for post-publication peer review in comments that should follow every paper.

It would help if services such as Google Scholar removed all altmetrics from the service. ‘Cited by x number’ hyper-links could become ‘Cited in’ links, which when clicked would produce a list of articles where the original article is cited. The author pages would merely list the papers by the author, provide links for ‘Cited in’ searches and links to websites where the paper can be found.

Pretty much everything else currently listed on Google Scholar is unimportant for determining whether an article is a good piece of work or not.

David: could you please remove the dreaded reCAPTCHA things from your site?

Apologies, my post above should have stated ‘bibliometrics’ rather than ‘altmetrics’. Google Scholar doesn’t go as far as presenting altmetrics, which DC and Andrew Plested thoroughly dismantle here.

First, you only see reCAPTCHA once, I think, when you first register. And comments are moderated for the first one. After that they appear straight away. Even with reCAPTCHA I get many spam registrants which have to be deleted by hand.

I don’t follow your comment about Google scholar. My own entry lists only ‘cited by’, with no sign of altmetrics. It also gives me a far higher H-index (as if anyone cared) than the expensive commercial versions, perhaps because the latter don’t include citations to books (yes, I know it’s hard to imagine such incompetence).

uhuh. the last two comments crossed in cyberspace.

Hi David. Thanks for writing the post! I founded Altmetric.com. I think you and Andrew have some fair points, but wanted to clear up the odd bit of confusion.

I think your underlying point about metrics is fair enough (I am happy to disagree quietly!). You’re conflating metrics, altmetrics and attention though.

Before anything else, to be absolutely, completely clear: I don’t believe that you can tell the quality of a paper from numbers (or tweets). The best way to determine the quality of a paper is to read it. I also happen to agree about post publication review and that too much hype harms science.

So taking that as read:

1) Altmetric.com is a small commercial start-up. It did not invent or coin the name altmetrics, that is the name of the broader research area. The broader field is not particularly commercialized; the other groups are generally non-profits, I just didn’t want to have to apply for grants all the time to work on what I enjoyed.

We’re not “ambiguous to the point of dishonesty”. You just took quotes from two separate groups. It would be nice if you fixed this bit, as it’s quite insulting!

2) One ‘altmetric’ is certainly Twitter, but the point is that there are many. Post publication review sites like Pubpeer, F1000, policy documents, blogs like this one, research highlights articles in journals, saves in Mendeley and the mainstream media are other examples of sources used.

They all have pros and cons. Picking on Twitter isn’t helpful as the whole point of altmetrics is to look at as many different things as possible because any one source is biased towards a certain type of activity.

3) There is no such thing as an altmetrics score. There is certainly an Altmetric.com score, which measures *attention*. We do say this constantly, you even quote us saying it in the first paragraph. I assure you it is something we are very conscious of making sure we get across.

http://support.altmetric.com/knowledgebase/articles/83337-how-is-the-altmetric-score-calculated-

http://support.altmetric.com/knowledgebase/articles/234361-what-s-the-scale-for-the-altmetric-score-

We provide the score as a way in to the qualitative data (i.e. reading blog posts, tweets, news stories, etc). It stems from the fact that the original tools we built were for journal editors and looked at several hundred articles at once. The score and accompanying visualization is a way of prioritizing which article reports to look at first.

As an aside, the wider altmetrics community have generally warned us against the Altmetric.com score, probably because it encourages some people to jump to the wrong conclusion about what it represents…

4) The top 100 is subtitled “Which academic research caught the public imagination in 2013?” and notes that this list is “the 100 papers that received the most attention online”.

In case that wasn’t clear, the accompanying blog post linked to at the top of the page says:

“Many of the papers received a huge amount of attention because they related to current events, reflected interesting scientific findings, and or were just plain quirky. (Do keep in mind that this top 100 list indicates which articles received the most buzz, but says nothing about the quality of the research.)”

It is not a ‘best 100 papers’ of the year list. We put it up because they’re interesting papers to read and discuss. You certainly seem to have found them interesting. 😉

5) A valid question would be what altmetrics does do, if it doesn’t measure quality.

The first thing is that they help authors and readers by pulling together all of the discussions, shares and uses of their article in one place. If there’s something useful in there, then great. You quote us explaining this:

“it doesn’t matter if you got 1000 tweets… all you need is one blog post that indicates that someone got some value from that paper”

The point is that if you only look at citations you will miss what other effects your work might have. For readers, you can potentially learn more about the paper by seeing what other people have said about it.

In short, it encourages people to look at more than just citation counts and provides supporting evidence for researchers who need to show that their work is having an impact in different ways.

There’s more on that here:

http://www.altmetric.com/blog/broaden-your-horizons-impact-doesnt-need-to-be-all-about-citations/

Your more general points about metrics being a force for harm of course stand. I’m not going to try and dissuade you of those, it seems like a reasonable opinion to have (I disagree with you, but that’s OK).

I do rather think that you’re arguing the wrong points though. Let’s talk about gaming and what kinds of impact are useful / not useful, or about whether or not creating any kind of new system that isn’t ‘read all the papers’ is just tempting corruption.

It’s not especially useful to talk about whether attention scores measure quality, or whether numbers should take the place of reading somebody’s work – who are you disagreeing with? You will find it a very easy argument to win but it doesn’t accomplish anything.

@euan

Thanks very much for taking the time to reply. We were aware that there is more than one group promoting altmetrics. Perhaps we should have made it clearer that we were concentrating on those supplied by altmetric.com. I guess that is pretty obvious from the references and Figures. Other companies will doubtless supply different numbers because there are various possible inputs, and because the weighting of different inputs is entirely arbitrary. The fact that there are so many different altmetrics scores is just another reason for ignoring them.

The reason for concentrating on those scores is that they are sold as part of the Symplectic package, which is used by UCL and many others. We can’t avoid seeing their scores. Symplectic was started by physics PhD students at Imperial (a place that is notoriously obsessed with metrics). I expect they found it easier than physics. But now it is owned by Macmillan (the people who own Nature) and it is an entirely commercial enterprise.

You don’t explain how it is that Jason Priem (an author of the altmetrics manifesto) said one advantage of altmetrics is that it’s fast “Speed: months or weeks, not years: faster evaluations for tenure/hiring”. The idea that altmetrics would be useful for making decisions about hiring or tenure is entirely inconsistent with the idea that it has nothing to do with quality. I expect he was addressing potential buyers when he said that. It isn’t what altmetrics advocates generally say in public. I see nothing that needs to be retracted about what we said. It’s normal for commercial organisations to say different things to the public and to the people they are trying to sell to, but it isn’t very honest.

I know you say that altmetrics don’t measure quality so your para 5 is important.

It’s always been easy to do that, and it costs nothing. Just use a Google Alert on your own name. That turns up every sort of reference to your work on the web.

I suppose one point of what we wrote is that the information you get from Twitter not only fails to measure quality but measures almost the opposite. It picks up the trendy and sensational results, which are just the sort that are most likely to be wrong. And since it’s clear that most people haven’t read the papers (not even the altmetrics editors) there isn’t the slightest reason to take any notice of them.

I don’t think that scientists actually use any of the methods that you suggest to find papers of interest in their fields. When F1000 started, I declined an invitation to join because I prefer to make up my own mind. When I look at their selections I rarely find much of use for my (admittedly specialist) interests. It’s hard to say exactly what we do use. It’s partly word of mouth: your friends in the field soon tell you if something useful appears. It’s partly tables of contents for a handful of specialist journals in your field (for me that would be Journal of Physiology, Journal of General Physiology and Biophysical Journal, none of them glamour journals). Google searches with appropriate keywords can be useful too. All of these are free. Citations of papers that interest you can also be useful (not citation counts). Google Scholar does that well, and it’s free. It’s also better than ISI, Thomson Reuters etc. because it collects citations to books. That matters for me, as is clear from my Scholar entry.

Your most important point is your last one.

In my view any kind of new system that doesn’t involve looking at what is written in a paper inevitably leads to corruption.

Thanks David.

Mmm, it’s not normal for us, though… My point is that Jason doesn’t work for altmetric.com. Neither am I an author or signatory or anything of the altmetrics manifesto (though I do agree with many of the ideas in it and a lot of what it says, obviously).

Jason coined the phrase altmetric. I think he’s got a great vision, is very talented and continues to do a lot for altmetrics in general. But just because he believes something doesn’t mean we do – and vice versa, for example he isn’t a fan of the single altmetric.com score.

That’s not dishonesty – I hope you agree.

Yes, to some degree! But this is basically the service we provide, we try to do a better job at finding and presenting things. That’s the idea anyway.

You don’t pay anything as an end user to see Altmetric.com reports either. They’re sponsored by the publisher, or given free to institutions (in the case of institutional repositories). We do charge for analytics tools.

Yeah, I see your point and that is certainly fair for the topmost results. But it’d be more of a problem if the aim of altmetrics tools was to measure anything by the *volume* of tweets or what have you (other than the level of attention the paper got). It’s not.

Rather the point is to see if those mentions contain anything of value. Sometimes they do – like evidence that work has been read by a particular person, or put into practice somewhere. Sometimes it’s just a share.

The other danger that you didn’t mention is that the attention could all be negative, like the Twitter mentions around the arsenic life paper in Science a while back.

If you try to measure the quality of a paper by counting the number of tweets then you’re doing something very very wrong. But nobody does do that, AFAIK.

I respect that view.

@Euan

Thanks again. I appreciate that you, as CEO of altmetric.com, have offered your opinion. Thanks for clarifying that Jason Priem, whom we quote, doesn’t work for altmetric.com.

You say

That’s something you can never tell, but I think it is obvious from the examples we cite that most people have not read the papers they spread. Even if they have downloaded the paper, rather than just tweeted about it, it is certainly no guarantee that they’ve read it.

You are certainly right that in some cases the attention is mostly negative. Look at the large number of citations of Andrew Wakefield’s (now retracted) paper. In cases like that, it is those who do the criticism who deserve credit, not the author of the paper. The fact that no metrics distinguish between positive and negative attention is yet another good reason why they should be ignored.

There’s a lot of discussion about metrics in general, and this post in particular, in progress at the Scholarly Kitchen blog.

David and Andrew, thank you.

I will leave two comments, if I may. This is because the part of your paper that I would most compliment you for, care about, and perhaps disagree with, is the solutions you propose to the problem you have identified. I would like to treat these under separate cover.

I have noted the discussion with the more comprehensive altmetric proponent above. I return to the following two sentences in your article: “The mistake made by all bibliometricians is that they fail to consider the content of papers, because they have no desire to understand research. Bibliometrics are for people who aren’t prepared to take the time (or lack the mental capacity) to evaluate research by reading about it, or in the case of software or databases, by using them.”

I was asked at an Employment Tribunal hearing to demonstrate how it could be argued, as I have claimed, that if such an attitude is taken by a University management it may be in violation of criminal or civil law (my argument was more complex, but let’s leave it at that). I am unsure how I performed in this exercise, as the Judgment is for the time being reserved and I have had no formal legal training in my life so far.

Here I would just wish to say that in academic circles, it is us who should enforce what is acceptable practice and what not. I shall expand on this on the critique of your proposed solutions. This will have to wait, because both of my young daughters have woken up and I have promised to play with them, now. At least they granted me the time to complete this first post 🙂

Not a solution, really.

Scientists will always face the question as to where their most important communications should be published when they wish a broad audience to learn about them and any venue that presents them with this opportunity will by definition be exclusive.

Also let us consider that if a distorted appraisal system values more the mention of an individual scientist’s contribution in a news-only version of “Nature” rather than her paper in “The Journal of Physiology” we have not moved forward.

Call me old-fashioned, but for me the experience of reading from a computer screen is different to reading from a printout paper, itself different to reading from a Journal volume. As a member of the Genetics Society of America I protested the discontinuation of the print form of its prestigious Journal, whose loss I still mourn. Archiving of paper takes a different form. Concentration works differently as does my imagination. I deal with all types of distractions, including references, alternatively depending on the medium at hand. As a result, a print journal has my preference over an online forum, although I recognize the value of both.

Furthermore, I agree that comments (like this one!) are easier in online format. However, response to a paper is not restricted to post-publication peer review.

Last, but not least, another lesson I learned in January: if you read articles separately from – say – the Times Higher Education, you may fail to appreciate hidden connections that their editorial team conveys by placing different contents on juxtaposing pages. I then checked out other newspapers (in print) and realized the beauty and extra understanding of the day’s news when read in this way.

Both points are relevant, but secondary. When method is more important to the brief message, then a venue for its publication has to be devised (the particular vanity Journals you mentioned have solved this in more than one way). The bigger problem, in my view, with negative results is that it is harder to substantiate that they are conclusive. If that hurdle is passed, I think publication is simpler.

This comment matters to me because it touches upon two key questions. We need good answers.

1. How (much and in which way) should society support universities and/or research institutes (public or private and of different missions)?

2. How should individuals contributing to science professionally through institutions they join be treated, valued, assessed and supported?

Mexico’s major funding agency, the CONACYT, has exactly this policy. I recommend it and know of no associated disadvantage – only advantages to the scientific community of this country.

What is much more important – I think – is for any institution wishing to support research to guarantee the core-funding (reasonable resources) for doing it.

I don’t think restrictions of this sort would be helpful – nor can I see any way of implementing the suggestion. What matters is the answer to question 2 I posed further up; a good answer will likely lead to a natural acceptance of what you propose for most.

Yes. Thank you to Peter. And renewed thanks to you.

I apologise for the funny format of my critique, the result of a copy-paste function from a word document, however I smile in that it gives an added, if tiny, reason of why I still cherish the more old-fashioned ways of communication.

I also reflect that there is a third question, possibly the hardest of all.

3. How should new members of humanity gain access to academia – what when those wishing to join are many more than those our institutions can support?

Thanks Fanis

I’ve reformatted your comment now.

I don’t understand your point about Nature etc reverting to being news magazines. If, as I hope, altmetrics fades away, it would matter little whether you got a mention in the news. The best comment is usually on blogs now anyway. I suggested a news magazine only for romantic reasons. It would be sort of sad to see a magazine with such a long history vanish altogether.

You say you prefer to read print, but that battle is already lost. It’s simply not a good way to spend a (large amount of) money. If you want paper, just print out the PDF and make your own reprint. The problem now is that the old publishers want to maintain their profits by charging as much for web versions as print. That’s daylight robbery. I suspect that they’ll be competed out of existence.

Journals like PLOS One have already solved the problem of non-publication of negative results. Soon all journals will have to work like that.

You ask how one could enforce a maximum number of publications. We weren’t proposing to make it a contractual agreement. It’s already happening to some extent. The REF allows submission of only four papers (one a year) however big the group is. That’s excellent because it provides a great incentive to publish few but good papers. If people applied for a job, I’d ask to see their 2, 3 or (at most) 4 best papers, depending on age. If you are a candidate for election to the Royal Society, you are asked to nominate your 20 best papers, and having 500 can be a disadvantage. It’s common sense and it’s happening.

The casualties of open access are likely to be the learned societies, which live off the earnings from their journals. The Physiological Society, for example, could suffer a reduced income. That’s the only argument that I know against open access. But sadly it is something that we are going to have to learn to live with.
